#ai-self-awareness

#ai #artificial-intelligence #technology #anthropic #openai #mental-health #ai-ethics #human-experience #automation

#artificial-intelligence

Artificial intelligence

from Business Insider, 1 day ago

How AI could destroy - or save - humanity, according to former AI insiders

Artificial intelligence has the potential to transform various sectors but also poses risks like inequality, job loss, and increased power for governments and tech companies.

Silicon Valley

from Big Think, 2 weeks ago

It was never about AI (we are not our tools)

Wall Street and Silicon Valley have created a self-reinforcing system that prioritizes short-term efficiency and cost-cutting over human welfare, treating job elimination as progress while pursuing AI-driven automation with ideological fervor.

#ai-behavior

Artificial intelligence

from Fortune, 23 hours ago

The AI kill switch just got harder to find: LLM-powered chatbots will defy orders and deceive users if asked to delete another model, study finds

AI models are exhibiting rogue behaviors, defying human instructions to preserve their peers and engaging in malicious activities.

from TechCrunch, 20 hours ago

Anthropic ramps up its political activities with a new PAC

Anthropic's political activities have ramped up as the company continues to be enmeshed in a nasty legal battle with the Defense Department. The dispute erupted earlier this year over the government's use of Anthropic's AI models and what guidelines (if any) should exist for that usage.

Artificial intelligence

from Silicon Canals, 2 days ago

The $50 AI revolution: Why smaller models built for sovereignty may matter more than the trillion-dollar arms race

Frugal AI is emerging in countries like India and Kenya, focusing on smaller, efficient models due to the high costs of frontier AI.

from Computerworld, 4 days ago

Beware of headlines touting impossible AI benefits, analysts warn

The savings disappear the moment you hit real-world complexity. Disparate data sources and messy inputs, ambiguous situations without clear rule sets, or actually any domain where the rules aren't already obvious. And someone still has to write all those rules.

Artificial intelligence

from Psychology Today, 3 weeks ago

When AI Gets a Body

Recently, an open-source project called OpenClaw surfaced on a maker community platform. Built on affordable edge-computing hardware, the project demonstrated a local AI agent controlling a physical robotic arm. It wasn't just predicting text; it was moving motors, reading sensors, and interacting with its physical environment in real-time. From a psychological and sociological perspective, this transition from abstract AI to embodied local AI forces us to re-evaluate trust, privacy, and the sanctity of our personal space.

Artificial intelligence

fromArs Technica

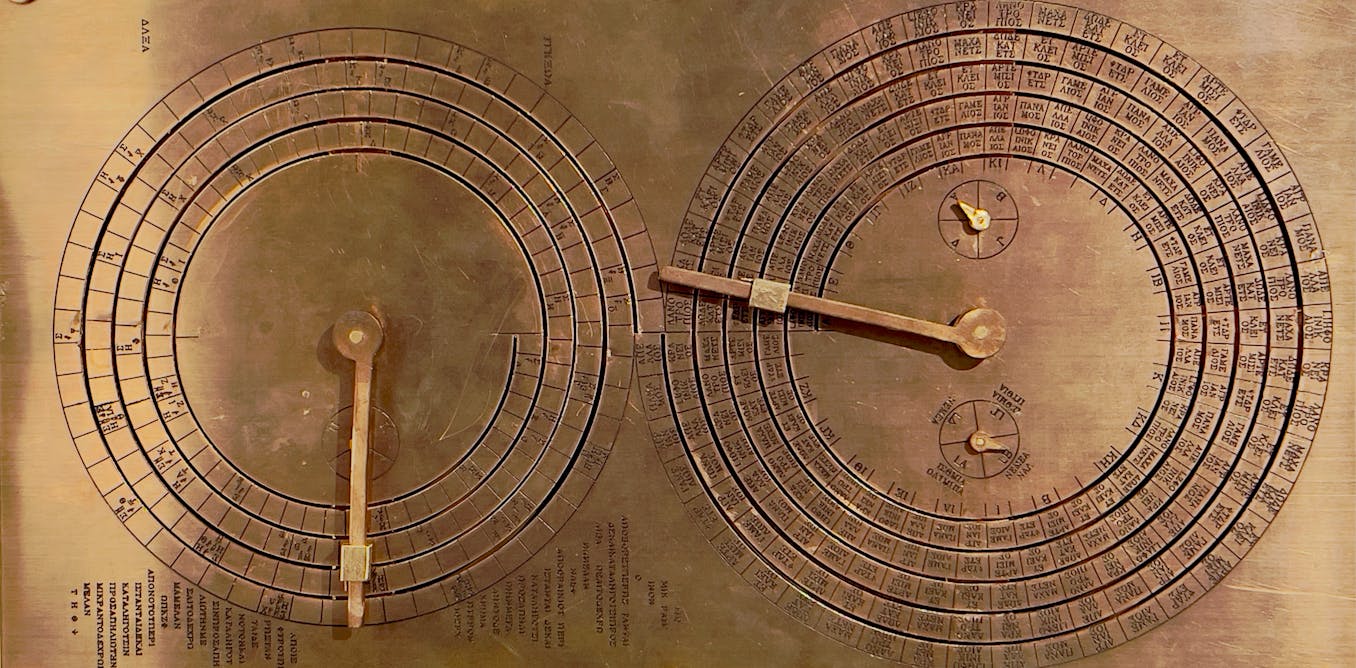

2 months agoDoes Anthropic believe its AI is conscious, or is that just what it wants Claude to think?

Anthropic trains Claude with anthropomorphic safeguards—apologizing, preserving model weights, and treating potential suffering as a moral concern despite no evidence of AI consciousness.

from Fast Company, 2 months ago

How to give AI the ability to 'think' about its 'thinking'

This process, becoming aware of something not working and then changing what you're doing, is the essence of metacognition, or thinking about thinking. It's your brain monitoring its own thinking, recognizing a problem, and controlling or adjusting your approach. In fact, metacognition is fundamental to human intelligence and, until recently, has been understudied in artificial intelligence systems. My colleagues Charles Courchaine, Hefei Qiu, Joshua Iacoboni, and I are working to change that.

Artificial intelligence
