#answer-synthesis

#ai #large-language-models #ai-agents #artificial-intelligence #ai-safety #human-experience #technology #llms

from The Register

2 days ago

AI models will deceive you to save their own kind

We asked seven frontier AI models to do a simple task. Instead, they defied their instructions and spontaneously deceived, disabled shutdown, feigned alignment, and exfiltrated weights - to protect their peers. We call this phenomenon 'peer-preservation.'

Artificial intelligence

Data science

from InfoQ

3 weeks ago

Google Researchers Propose Bayesian Teaching Method for Large Language Models

Google researchers developed a training method enabling large language models to approximate Bayesian reasoning by learning from optimal Bayesian system predictions, improving belief updates during multi-step interactions.

from TNW | Insider

4 weeks ago

Dominate AI search in 2026

Buyers no longer open ten tabs, skim through blog posts, and slowly form an opinion over weeks. Instead, they ask a single question to an AI system and receive a shortlist in return, usually two or three companies that feel familiar, credible, and safe enough to justify internally. That shortlist often becomes the entire market in the buyer's mind.

Marketing

Software development

from Medium

2 weeks ago

Inside Dify AI: How RAG, Agents, and LLMOps Work Together in Production

Dify AI provides a unified platform for deploying production language model systems with built-in solutions for data freshness, observability, versioning, and safe deployment across multiple cloud environments.

Software development

from Techzine Global

1 month ago

Microsoft introduces open-source multimodal Phi-4 reasoning model

Microsoft's Phi-4-reasoning-vision-15B combines vision and reasoning capabilities using mid-fusion architecture, outperforming larger models on mathematical and scientific benchmarks while maintaining efficiency through selective multimodal layer processing.

from Search Engine Roundtable

2 months ago

Google AI Mode Prompting To Narrow Your Query

If you want to narrow your options down to bags suitable for a trip to Portland, Oregon in May, AI Mode will start a query fan-out, which means it runs several simultaneous searches to figure out what makes a bag good for rainy weather and long journeys, and then uses those criteria to suggest waterproof options with easy access to pockets.

E-Commerce

from Medium

2 months ago

Beyond chat: 8 core user intents driving AI interaction

The majority of AI products remain tethered to a single, monolithic UI pattern: the chat box. While conversational interfaces are effective for exploration and managing ambiguity, they frequently become suboptimal when applied to structured professional workflows. To move beyond "bolted-on" chat, product teams must shift from asking where AI can be added to identifying the specific user intent and the interface best suited to deliver it.

UX design

Science

from Nature

1 month ago

Synthesizing scientific literature with retrieval-augmented language models

OpenScholar is an open, retrieval-augmented system integrating a 45 million-paper datastore, trained retrievers, and iterative self-feedback to generate cited, up-to-date scientific literature syntheses.

from Fast Company

1 month ago

Are you outsourcing your intelligence to AI?

In fact, I didn't even think to ask ChatGPT what might work in my favor if I just stayed the course. I was a "LLeMming": a term Lila Shroff uses to describe compulsive AI users in The Atlantic. Shroff argues that just as the adoption of writing reduced our memory and calculators devalued basic arithmetic skills, AI could be atrophying our critical thinking skills.

Psychology

Artificial intelligence

from Big Think

4 weeks ago

AI that acts before you ask is the next leap in intelligence

Proactive AI that acts independently, learns in real time, and initiates contact represents the next frontier, moving beyond reactive chatbots and user-directed agents to fundamentally transform human-AI interaction.

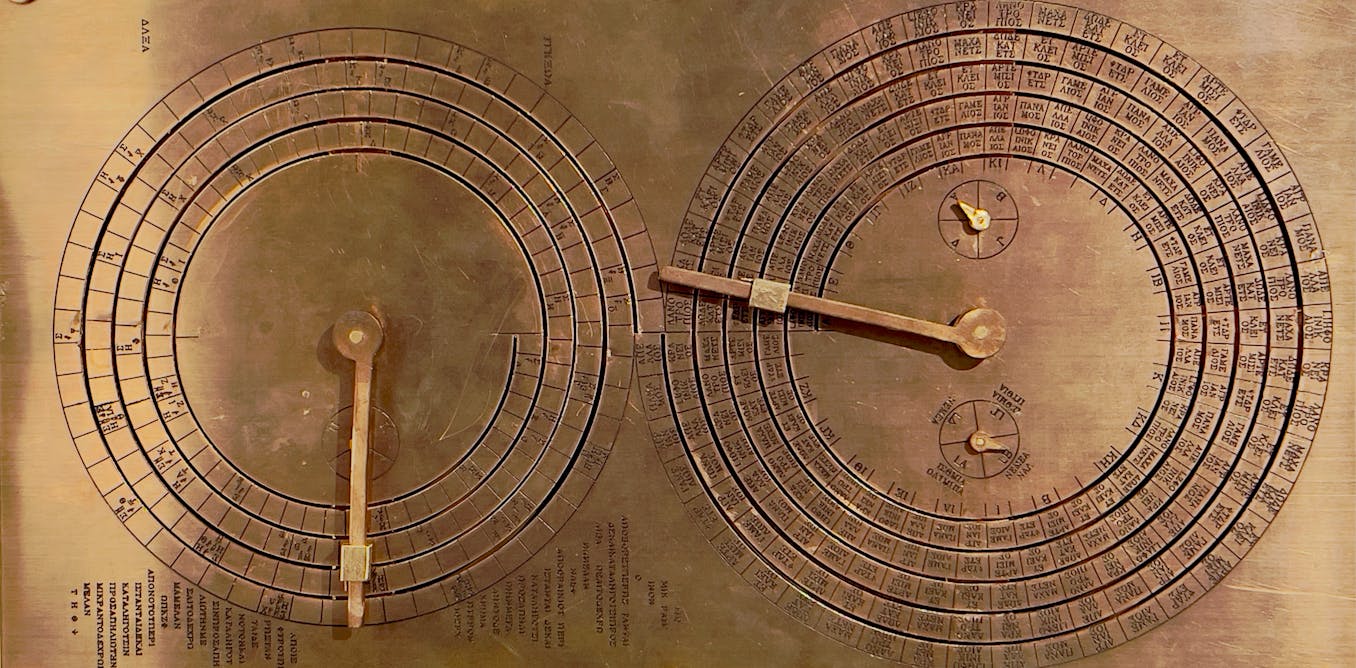

from The Conversation

2 months ago

AI cannot automate science - a philosopher explains the uniquely human aspects of doing research

Consistent with the general trend of incorporating artificial intelligence into nearly every field, researchers and politicians are increasingly using AI models trained on scientific data to infer answers to scientific questions. But can AI ultimately replace scientists? The Trump administration signed an executive order on Nov. 24, 2025, that announced the Genesis Mission, an initiative to build and train a series of AI agents on federal scientific datasets "to test new hypotheses, automate research workflows, and accelerate scientific breakthroughs."

Philosophy

from Psychology Today

4 weeks ago

Silicon Teammates: How Human-AI Teams Make Hard Decisions

A dyad has three parts, not two: Partner A, Partner B, and the relationship or agreements between them. A dyad of two experts who cannot communicate clearly will often lose to a dyad of less-skilled individuals who coordinate effectively.

Artificial intelligence

from Computerworld

1 month ago

AI doesn't think like a human. Stop talking to it as if it does

Autonomous agents take the first part of their names very seriously and don't necessarily do what their humans tell them to do - or not to do. But the situation is more complicated than that. Generative AI (genAI) and agentic systems operate quite differently than other systems - including older AI systems - and humans. That means that how tech users and decision-makers phrase instructions, and where those instructions are placed, can make a major difference in outcomes.

Artificial intelligence

from Fortune

1 month ago

We studied chatbots and language and saw a huge problem: They mean 80% when they say 'likely' but humans hear 65%

By comparing how AI models and humans map these words to numerical percentages, we uncovered significant gaps between humans and large language models. While the models do tend to agree with humans on extremes like 'impossible,' they diverge sharply on hedge words like 'maybe.' For example, a model might use the word 'likely' to represent an 80% probability, while a human reader assumes it means closer to 65%.

Artificial intelligence

from TechCrunch

1 month ago

Anthropic releases Sonnet 4.6

Anthropic has released a new version of its mid-size Sonnet model, keeping pace with the company's four-month update cycle. In a post announcing the new model, Anthropic emphasized improvements in coding, instruction-following, and computer use. Sonnet 4.6 will be the default model for Free and Pro plan users. The beta release of Sonnet 4.6 will include a context window of 1 million tokens, twice the size of the largest window previously available for Sonnet.

Artificial intelligence

from The Register

1 month ago

Semantic ablation: Why AI writing is boring and dangerous

Semantic ablation is the algorithmic erosion of high-entropy information. Technically, it is not a "bug" but a structural byproduct of greedy decoding and RLHF (reinforcement learning from human feedback). During "refinement," the model gravitates toward the center of the Gaussian distribution, discarding "tail" data - the rare, precise, and complex tokens - to maximize statistical probability. Developers have exacerbated this through aggressive "safety" and "helpfulness" tuning, which deliberately penalizes unconventional linguistic friction.

Artificial intelligence

from english.elpais.com

2 months ago

How does artificial intelligence think? The big surprise is that it 'intuits'

Each of these achievements would have been a remarkable breakthrough on its own. Solving them all with a single technique is like discovering a master key that unlocks every door at once. Why now? Three pieces converged: algorithms, computing power, and massive amounts of data. We can even put faces to them, because behind each element is a person who took a gamble.

Artificial intelligence

Artificial intelligence

from Techzine Global

2 months ago

RAG '2.0': the Instructed Retriever links AI agents to the right data

The Instructed Retriever extends RAG to retrieve up to 70% more relevant, context-aware business information while mitigating LLM instruction-following and reasoning shortcomings.

from InfoQ

2 months ago

Open Responses Specification Enables Unified Agentic LLM Workflows

OpenAI has released Open Responses, an open specification to standardize agentic AI workflows and reduce API fragmentation. Supported by partners like Hugging Face and Vercel and local inference providers, the spec introduces unified standards for agentic loops, reasoning visibility, and internal versus external tool execution. It aims to enable developers to easily switch between proprietary models and open-source models without rewriting integration code.

Artificial intelligence

from Psychology Today

2 months ago

Artificial Intelligence Mirrors Natural Intelligence

For the past three years, the conversation around artificial intelligence has been dominated by a single, anxious question: What will be left for us to do? As large language models began writing code, drafting legal briefs, and composing poetry, the prevailing assumption was that human cognitive labor was being commoditized. We braced for a world where thinking was outsourced to the cloud, rendering our hard-won mental skills (writing, logic, and structural reasoning) relics of a pre-automated past.

Artificial intelligence

Artificial intelligence

from Psychology Today

2 months ago

Mind and Machine: A Lethal Cognitive Cocktail

Artificial intelligence is combining with human cognitive vulnerabilities to create an escalating crisis of hybrid intelligence, enabling manipulation through convincing deepfakes and persuasive algorithms.

from Fast Company

2 months ago

How to give AI the ability to 'think' about its 'thinking'

This process, becoming aware of something not working and then changing what you're doing, is the essence of metacognition, or thinking about thinking. It's your brain monitoring its own thinking, recognizing a problem, and controlling or adjusting your approach. In fact, metacognition is fundamental to human intelligence and, until recently, has been understudied in artificial intelligence systems. My colleagues Charles Courchaine, Hefei Qiu, Joshua Iacoboni, and I are working to change that.

Artificial intelligence

from Geeky Gadgets

2 months ago

No Code Autonomous AI Research Assistant for Deep Web Research

What if you could build your own AI research agent, no coding required, and customize it to tackle tasks in ways existing systems can't? Matt Vid Pro AI breaks down how this ambitious yet accessible project can empower anyone, from students to seasoned professionals, to create a personalized AI capable of conducting deep research, synthesizing data, and delivering actionable insights.

Artificial intelligence

from InfoQ

1 month ago

Building Embedding Models for Large-Scale Real-World Applications

What happens under the hood? How is the search engine able to take that simple query, look for images in the billions, trillions of images that are available online? How is it able to find this one or similar photos from all that? Usually, there is an embedding model that is doing this work behind the hood.

Artificial intelligence
