#application-performance


#observability #ai #kubernetes #distributed-systems #software-development #ai-integration #devops #security

#productivity

Productivity

from Fast Company

19 hours ago

Many productivity programs solve the wrong problem. This is what leaders should do instead

Organizations face work design problems rather than productivity issues, leading to temporary solutions that fail to address underlying conflicts in problem-solving approaches.

#observability

DevOps

from New Relic

1 month ago

Introducing Intelligent Workloads, Providing Business-Aligned Observability

Modern distributed systems require intelligent workload monitoring that connects technical metrics to business outcomes, replacing outdated green-light dashboards with AI-driven observability that aligns infrastructure health with revenue impact.

Web frameworks

from Medium

2 weeks ago

Why Most Spring Boot Apps Fail in Production (7 Critical Mistakes)

Spring Boot production failures stem from seven critical mistakes including improper dependency injection, configuration errors, and resource management issues that developers can systematically avoid.

Miscellaneous

from DevOps.com

1 month ago

I Learned Traffic Optimization Before I Learned Cloud Computing. It Turns Out the Lessons Were the Same.

Cloud infrastructure requires understanding system behavior and costs to operate effectively at speed, similar to how skilled drivers anticipate conditions rather than simply driving fast.

from Techzine Global

1 day ago

IGEL OS can now run AI models locally on endpoints

AI Armor provides dynamic runtime security and relies on a central policy engine in the Universal Management Suite (UMS) to meet compliance requirements, ensuring that organizations can manage their security effectively.

DevOps

Business intelligence

from DevOps.com

1 month ago

Why OpenTelemetry Is Paving the Way for the Rise of the Observability Warehouse

OpenTelemetry adoption drives observability architecture toward unified warehouse models that centralize logs, metrics, and traces for scalable, cost-effective real-time operational intelligence.

Node JS

from The NodeSource Blog - Node.js Tutorials, Guides, and Updates

5 months ago

Intelligent Observability: How AI is Transforming Node.js Telemetry into Actionable Optimization

N|Sentinel uses AI integrated with N|Solid to detect Node.js anomalies, analyze runtime behavior, and provide real-time actionable insights to prevent performance issues.

from Raymondcamden

1 month ago

I threw thousands of files at Astro and you won't believe what happened next...

I began by creating a soft link locally from my blog's repo of posts to the src/pages/posts of a new Astro site. My blog currently has 6742 posts (all high quality I assure you). Each one looks like so:

    ---
    layout: post
    title: "Creating Reddit Summaries with URL Context and Gemini"
    date: "2026-02-09T18:00:00"
    categories: ["development"]
    tags: ["python","generative ai"]
    banner_image: /images/banners/cat_on_papers2.jpg
    permalink: /2026/02/09/creating-reddit-summaries-with-gemini
    description: Using Gemini APIs to create a summary of a subreddit.
    ---

    Interesting content no one will probably read here...

Austin

DevOps

from InfoQ

2 weeks ago

QCon London 2026: Uncorking Queueing Bottlenecks with OpenTelemetry

Distributed tracing with OpenTelemetry enables engineers to identify root causes across service boundaries by maintaining hierarchical visibility of operations, while SLOs based on latency provide more reliable alerting than infrastructure metrics.
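A latency-based SLO of the kind the summary mentions can be illustrated with a small sketch. This is only an illustration under stated assumptions, not the method presented in the talk: the function names `latency_sli` and `should_alert`, the 300 ms threshold, and the 90% target are all hypothetical.

```python
def latency_sli(latencies_ms, threshold_ms):
    """Fraction of requests that finished within the latency threshold."""
    if not latencies_ms:
        return 1.0  # assumption: no traffic counts as meeting the objective
    good = sum(1 for value in latencies_ms if value <= threshold_ms)
    return good / len(latencies_ms)


def should_alert(latencies_ms, threshold_ms, slo_target):
    """Alert when the measured SLI falls below the SLO target."""
    return latency_sli(latencies_ms, threshold_ms) < slo_target


# Hypothetical request latencies observed in one evaluation window.
window = [100, 200, 300, 400]
print(latency_sli(window, threshold_ms=300))
print(should_alert(window, threshold_ms=300, slo_target=0.9))
```

The alert here depends only on what requests experienced, so it fires even when host-level metrics such as CPU usage look healthy.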

from DevOps.com

1 month ago

What to do About AI's Forced Rethink of Reliability in Modern DevOps

For years, reliability discussions have focused on uptime and whether a service met its internal SLO. However, as systems become more distributed, reliant on complex internet stacks, and integrated with AI, this binary perspective is no longer sufficient. Reliability now encompasses digital experience, speed, and business impact. For the second year in a row, The SRE Report highlights this shift.

Software development

from DevOps.com

3 weeks ago

Zero Downtime Multicloud Migrations for Observability Control Planes

An observability control plane isn't just a dashboard. It's the operational authority system. It defines alert rules, routing, ownership, escalation policy, and notification endpoints. When that layer is wrong, the impact is immediate. The wrong team gets paged. The right team never hears about the incident. Your service level indicators look clean while production burns.

DevOps

from InfoQ

1 month ago

DevOps Modernization: AI Agents, Intelligent Observability and Automation

Just a couple of words about today's topic. Of course, nothing surprising here, AI is changing DevOps and is changing the way teams are moving beyond reactive monitoring towards predictive automated delivery and operations. What does that mean? How can teams actually implement predictive incident detection, intelligent rollout, and AI-driven remediation? Also, how can we accelerate delivery? Those are all topics that today's panelists hopefully are going to cover.

Software development

from DevOps.com

1 month ago

Harness Readies Resilience Testing Platform to Make Applications More Robust

The Harness Resilience Testing platform extends the scope of the tests provided to include application load and disaster recovery (DR) testing tools that will enable DevOps teams to further streamline workflows.

DevOps

from Dbmaestro

4 years ago

What is Database Delivery Automation and Why Do You Need It?

Manual database deployment means longer release times. Database specialists have to spend several working days prior to release writing and testing scripts which in itself leads to prolonged deployment cycles and less time for testing. As a result, applications are not released on time and customers are not receiving the latest updates and bug fixes. Manual work inevitably results in errors, which cause problems and bottlenecks.

Software development

DevOps

from SitePoint Forums | Web Development & Design Community

2 months ago

What is the best way to differentiate between performance testing and a true reliability test system?

Prioritize fault tolerance before resource optimization; automate long-term reliability tests with staged, parallel, and targeted strategies to preserve CI/CD velocity.

fromNew Relic

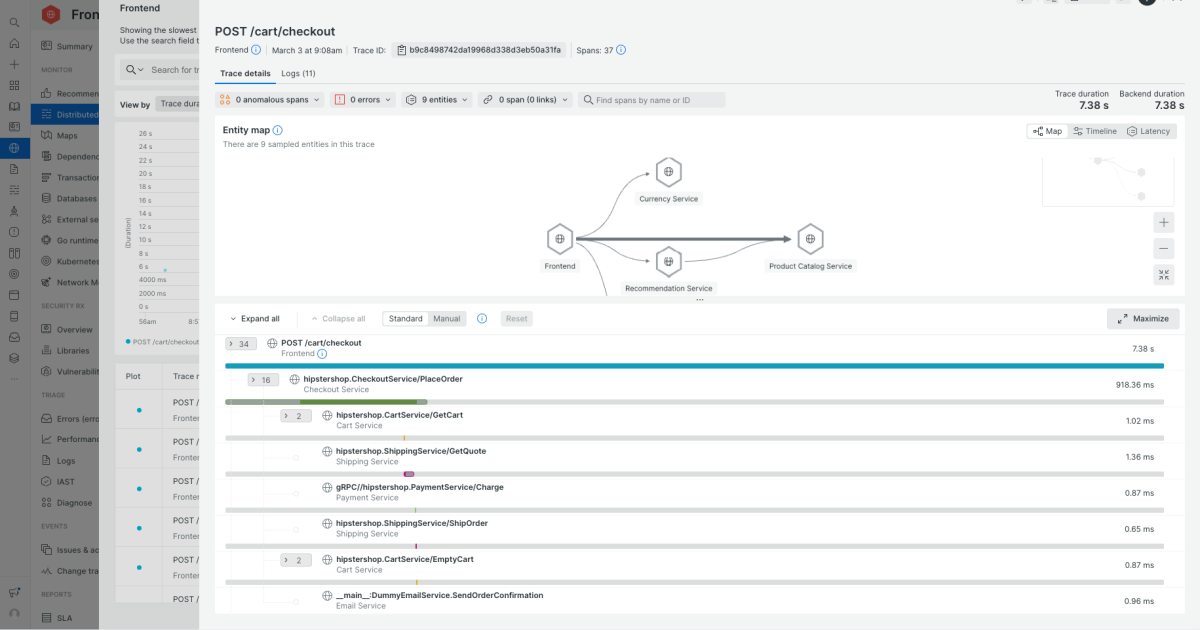

1 month ago5 Best Application Performance Monitoring Tools to Consider in 2026

Support for distributed systems. Check how well the tool handles microservices, serverless, and Kubernetes. Can you follow a request across services, queues, and third-party APIs? Does it understand pods, nodes, clusters, and autoscaling events, or does it treat everything like a static host? Correlation across metrics, logs, and traces. In an incident, you shouldn't be copying IDs between tools. Look for the ability to pivot directly from a slow trace to relevant logs,

DevOps

from Dbmaestro

5 years ago

Database Delivery Automation in the Multi-Cloud World

The main advantage of going the Multi-Cloud way is that organizations can "put their eggs in different baskets" and be more versatile in their approach to how they do things. For example, they can mix it up and opt for a cloud-based Platform-as-a-Service (PaaS) solution when it comes to the database, while going the Software-as-a-Service (SaaS) route for their application endeavors.

DevOps

from Medium

4 months ago

Cut Your Docker Build Time in Half: 6 Essential Optimization Techniques

Docker builds images in layers, caching each one. When you rebuild, Docker reuses unchanged layers to avoid re-executing steps; this is build caching. So the order of your instructions and the size of your build context have a huge impact on speed and image size. Here are some quick tips to build images about twice as fast: 1. Place least-changing instructions at the top
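Why instruction order matters can be shown with a toy model of layer caching. This is a simplified sketch, not Docker's actual cache-key algorithm: the helper names `layer_keys` and `reused_layers` are hypothetical, and each string stands in for an instruction together with the content of the files it copies. The one property the model keeps is that a changed layer invalidates every layer after it.

```python
import hashlib


def layer_keys(instructions):
    """Return one cache key per instruction; each key chains the previous key."""
    keys = []
    parent = ""
    for text in instructions:
        parent = hashlib.sha256((parent + "\n" + text).encode()).hexdigest()
        keys.append(parent)
    return keys


def reused_layers(old, new):
    """Count the leading layers whose chained key is unchanged between builds."""
    count = 0
    for old_key, new_key in zip(layer_keys(old), layer_keys(new)):
        if old_key != new_key:
            break  # everything after the first changed layer is rebuilt
        count += 1
    return count


# Hypothetical builds in which only the application source changes (v1 -> v2).
stable_first_old = ["FROM python:3.12", "COPY requirements.txt v1", "RUN pip install", "COPY src v1"]
stable_first_new = ["FROM python:3.12", "COPY requirements.txt v1", "RUN pip install", "COPY src v2"]
volatile_first_old = ["FROM python:3.12", "COPY src v1", "COPY requirements.txt v1", "RUN pip install"]
volatile_first_new = ["FROM python:3.12", "COPY src v2", "COPY requirements.txt v1", "RUN pip install"]

print(reused_layers(stable_first_old, stable_first_new))      # 3
print(reused_layers(volatile_first_old, volatile_first_new))  # 1
```

With the frequently changing source copied last, three of four layers come from cache; with it copied early, the dependency install is rebuilt on every source change.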

DevOps
