#foveated-eye-tracking

#smart-glasses #technology #meta #gaming #ai #ai-glasses #virtual-reality #ray-ban #augmented-reality #accessibility

from Engadget

2 days ago

Sony's gaming division just bought an AI startup that turns photos into 3D volumes

Following the acquisition, the Cinemersive Labs team will join SIE's Visual Computing Group (VCG) and contribute to our broader efforts in advancing state of the art visual computing within games. This includes applying machine learning to enhance gameplay visuals, improve rendering techniques, and unlock new levels of visual fidelity for players.

Marketing tech

from Mail Online

3 days ago

Scientists work out why the car you just overtook seems to reappear

Dr. Conor Boland explained that red-light timing can erase small speed advantages, allowing a slower car to catch up again and again. He noted, 'You pass a car, and then a few minutes later, it ends up beside you again.' This phenomenon is partly psychological, as we remember surprising moments when the same car shows up again, but it is also built into how traffic works.

Psychology

from Mail Online

5 days ago

What colour are the dots in this optical illusion?

'In this paper a novel optical illusion is described in which purple structures (dots) are perceived as purple at the point of fixation, while the surrounding structures (dots) of the same purple colour are perceived toward a blue hue.'

Science

Video games

from Futurism

2 weeks ago

Nvidia Ridiculed for "Sloptracing" Feature That Uses AI to Yassify Video Games in Real Time

Nvidia's DLSS 5 AI upscaling feature received overwhelmingly negative backlash from gamers and developers who criticized it for distorting game art styles rather than enhancing visual clarity.

#digital-eye-strain

Health

from Employee Benefit News

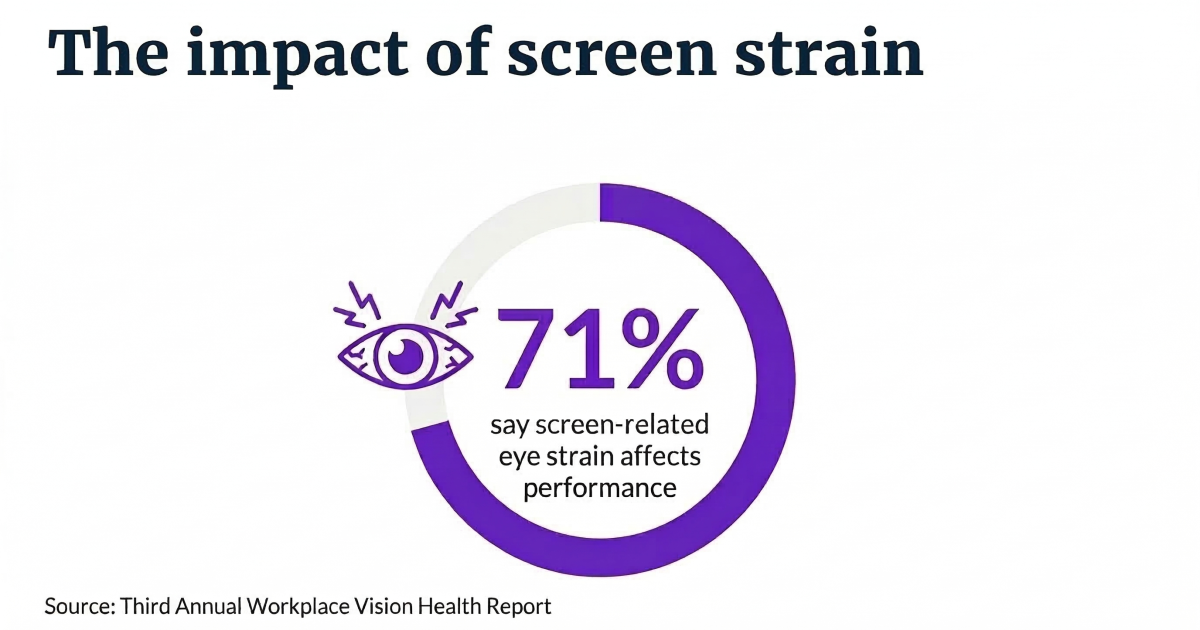

2 weeks ago

Screen time surges for desk workers, straining eyes and productivity

Desk workers average 99.2 hours of screen time weekly, causing eye strain that reduces productivity by nearly one full day per week, prompting employers to reassess health and benefits strategies.

Philosophy

from The Conversation

2 weeks ago

Human vision: what we actually see - and don't see - tells us a lot about consciousness

Significant visual processing occurs unconsciously in the brain, as demonstrated by blindsight and inattentional blindness phenomena where people perceive visual information without conscious awareness.

from WIRED

3 weeks ago

This Digital Picture Frame Wants to Bring People Closer to a Holographic Future

Upload any picture or video, and Musubi uses artificial intelligence to extract the most important part and hover it in space as a 3D image within the frame. That could be a video of a child's first steps or a snapshot of a birthday party. The image will be displayed in 3D form, viewable in all its holographic glory across nearly 170 degrees.

Gadgets

Mental health

from Business Insider

3 weeks ago

Using too many AI tools at once can actually make you less productive and cause 'brain fry,' study finds

Workers who use multiple AI tools simultaneously experience a form of mental fatigue called 'AI brain fry,' characterized by cognitive fog and reduced decision-making ability once tool usage exceeds an optimal level.

from designboom | architecture & design magazine

4 days ago

body agency and the ways wearable devices let people regain control of their physical forms

Body agency is a power returned after an incident took it away from the user's physical form, and some wearable devices and technologies have this exact goal in mind.

Wearables

from Engadget

3 weeks ago

Looking Glass' Musubi showcases its holographic display in a consumer-friendly package

Looking Glass has been doggedly committed to making holographic displays the next big thing since 2019, and with its new Musubi digital photo frame, it might finally be offering its tech at a price that's hard to deny. Musubi is scheduled to start shipping in June, and unlike the company's previous, more developer-focused kits, the company's new display only costs $149.

Gadgets

from www.theguardian.com

1 month ago

Can you solve it? You won't believe these optical illusions!

The illusion is the latest masterpiece from Olivier Redon, a French-American inventor, who has had his creations used in museums and on TV programmes around the world. For today's puzzles, I present five of Redon's most brilliant images. The challenge is to figure out how he managed to create them.

Photography

Psychology

from Silicon Canals

1 month ago

The reason most people feel drained after video calls but not after phone calls has less to do with screen fatigue and more to do with the fact that seeing your own face continuously activates self-monitoring circuits your brain was never designed to sustain

Video call fatigue stems from continuous self-viewing triggering constant self-evaluation, not primarily from screen time or blue light exposure.

Software development

from Harvard Business Review

1 month ago

When Using AI Leads to "Brain Fry"

Steve Yegge launched Gas Town, an open-source platform enabling simultaneous orchestration of multiple Claude Code agents for rapid software development, though users report the speed creates cognitive overload.

from Mail Online

2 weeks ago

AI glasses that can help dementia patients live independently

The glasses, developed over ten years, can guide people living with early-stage dementia through daily activities by identifying everyday objects, providing audio commentary, and displaying visual prompts.

Wearables

from Computerworld

1 month ago

With attention shifting to AI smart glasses, VR faces another reality check

"It's not an overstatement to declare another VR winter," said J.P. Gownder, vice president and principal analyst at Forrester. "I think we might even go as far as to say there's only a handful of successful scenarios where people are using VR." This assessment reflects the industry's struggle to find practical applications beyond niche markets.

Gadgets

from Fast Company

1 month ago

How Insight gamified AI

When we rolled out a custom-built company GPT to our 14,000 teammates several years ago, we saw three clear groups emerge. First, there was the 'jump-in-with-both-feet' crowd. These are the early adopters who treat anything new like a shiny toy. Next were the skeptics who wondered how much of an impact AI would have on their daily work lives. And finally, there was a big group that genuinely wanted to learn but didn't know where to start.

Artificial intelligence

from www.scientificamerican.com

1 month ago

How LabOS AI-powered smart goggles could reduce human error in science

Laboratory safety goggles have finally joined the ranks of smart devices. That's the promise behind LabOS, an AI operating system for scientific laboratories built by the Stanford-Princeton AI Coscientist Team, a group led by Stanford University bioengineer Le Cong and Princeton University computer scientist Mengdi Wang, with founding partners that include NVIDIA. Powered by NVIDIA's vision-language models to process visual data, the system is designed to provide AI with real-time knowledge of lab work so it can determine what causes experiments to fail or succeed and rapidly train new scientists to expert levels by guiding them through experimental protocols.

Artificial intelligence

from PCMAG

12 years ago

Google Glass Patent to Watch What You Watch, Read Your Emotions

If this sounds crazy, remember that last month, WatchGuard's director of security strategy Corey Nachreiner warned SecurityWatch that Google Glass represented an "information goldmine" for both attackers and advertisers. He talked about a sci-fi scenario where Glass could recognize objects in view. "In the future, we're going to have algorithms that will pinpoint things in video automatically," said Nachreiner. This is, more or less, exactly what Google's gaze-tracking patent covers.

Privacy technologies

from Social Media Today

2 months ago

Meta Announces Funding Grants for Research Into AI Glasses Use

There are two types of grants that U.S.-based organizations can apply for: Accelerator Grants for those who are already leveraging our AI glasses to scale their impact, and Catalyst Grants for organizations proposing new, high-impact applications using our Device Access Toolkit. We will award 15 Accelerator Grants of $25,000 and 10 of $50,000 USD, depending on the scale of the project. We'll also award five Catalyst Grants of $200,000. In total, we'll grant nearly $2 million to more than 30 organizations and developers.

Agriculture

from TechCrunch

1 month ago

Meta plans to add facial recognition to its smart glasses, report claims

Meta plans to add facial recognition to its smart glasses as soon as this year, according to a new report from The New York Times. The feature, internally known as "Name Tag," would allow smart glasses wearers to identify people and get information about them through Meta's AI assistant.

Privacy professionals

from GSMArena.com

2 months ago

Razer unveils an "AI-native" headset with cameras that see what you see

The Motoko's dual first-person-view cameras are positioned at eye level to basically see what you see, enabling real-time object and text recognition - translating street signs, tracking gym reps, summarizing documents on the fly, all of that. There are also dual far and near-field mics, working together to capture voice commands and pick up dialogue within view.

Mobile UX

from ScienceDaily

2 months ago

Researchers found a tipping point for video gaming and health

Spending more than 10 hours a week playing video games may begin to affect young people's eating habits, sleep quality, and body weight, according to new research led by Curtin University and published in Nutrition. The study surveyed 317 students from five universities across Australia. Participants had a median age of 20 years, placing the focus squarely on young adults during a key stage of habit formation.

Video games

from Psychology Today

1 month ago

Why Your Eyes Like What Your Eyes Like

Real estate with ocean views, stunning mountain vistas, and wide-open green spaces sells at premium prices because humans find those settings pleasing [1-5]. Certain color combinations in fashion, such as brown and forest green, blend harmoniously, while others, such as hot pink and orange, clash. And our eyes like certain proportions in visual objects (like buildings and human faces) but not others.

Science

from WIRED

1 month ago

Pico's Project Swan XR Headset Wants to Go Where the Apple Vision Pro Failed

One of the big focuses of the new operating system version is what Pico calls PanoScreen, a feature that lets the wearer run multiple applications at once while also keeping a 360-degree view of the real-world space around them. Other users can pop into the space as 3D avatars while you spin around to see spreadsheets, browser tabs, design software, or whatever else you're working on.

Wearables

from Medium

2 months ago

AI won't (re)generate your focus

You settle in for a quick scroll through your feed, maybe just to unwind for a minute or two. But somewhere between a cooking hack and a clip you've already forgotten, forty minutes vanished. It's all a blur. Welcome to the era of infinite content and finite attention, where our brains are working overtime just to keep up with the deluge.

Digital life

Gadgets

from TechRepublic

2 months ago

How AI Mirrors Are Changing the Way Blind People See Themselves

AI tools enable blind individuals to receive detailed, personalized visual feedback about their appearance, creating new practical opportunities and emerging emotional and psychological consequences.

from ZDNET

2 months ago

Most people ignore this productivity-enhancing monitor setting - here's why you shouldn't

Turning a computer monitor from a landscape position to a portrait position may seem odd at first. After all, a horizontal display allows you to see more content on-screen, plus it is a more familiar experience. However, there are certain situations where flipping your screen vertically is genuinely useful. Programmers, for example, often prefer this orientation because it lets them see more lines of code without needing to scroll. Writers, like myself, appreciate this mode, as it makes reading and creating documents easier.

Gadgets

from GameSpot

2 months ago

Asus ROG Enters Extended Reality With 240Hz Gaming Glasses

Asus has hit the Consumer Electronics Show show floor with a brand-new set of Extended Reality glasses. Developed in partnership with Xreal, the Asus ROG Xreal R1 packs an impressive amount of technology into a slim frame for your face, allowing you to stream video directly to your eyes via a USB-C connection. Internally, the Asus ROG Xreal R1 features 240Hz micro-OLED 1080p lenses, and it comes with an ROG Control Dock for HDMI and DisplayPort connectivity.

Gadgets

from Yanko Design - Modern Industrial Design News

2 months ago

This $18,000 Holographic Display Needs No Glasses to See 3D Videos

Hololuminescent Display (HLD) converts standard 2D videos into naked-eye, razor-thin holographic 3D displays for desktop and large public installations.

Gadgets

from GameSpot

2 months ago

Razer's New Holographic AI Assistant Sits On Your Desk And Promises Help, Not Judgement

Razer's Project Ava is an AI-powered holographic desktop companion with animated personalities, contextual vision and audio sensing, real-time interaction, and PC-connected USB-C operation.

Gadgets

from Yanko Design - Modern Industrial Design News

2 months ago

Razer's Project AVA Brings Holographic AI Companions to Your Desk

Razer's Project AVA is a desk holographic AI that projects a 5.5-inch animated avatar which talks, learns habits, shows facial expressions, and syncs lip movement.

Wearables

from ZDNET

2 months ago

These XR glasses gave me a 174-inch screen to work with - and a shockingly wide field of view

Viture Beast XR glasses prioritize a brighter, larger-FoV experience with onboard controls, dynamic electrochromic dimming, USB-C connectivity, and three flexible positioning modes.

from ZDNET

2 months ago

The makers of Meta Ray-Ban Display glasses showed me what's next for AR, and it's wild

The company is building directly on its major success supplying its waveguide technology to glasses, and proving that geometric waveguides work at consumer scale with standard glass. At CES, Lumus showcased a ZOE prototype with a field of view of more than 70 degrees, an optimized Z-30 with 40% more brightness, and a Z-30 2.0 preview that's 40% thinner. David Goldman, VP of marketing, walked me through each demo with clear enthusiasm about the progress Lumus is making.

Wearables

from Engadget

2 months ago

Handwriting is my new favorite way to text with the Meta Ray-Ban Display glasses

When Meta first announced its display-enabled smart glasses last year, it teased a handwriting feature that allows users to send messages by tracing letters with their hands. Now, the company is starting to roll it out, with people enrolled in its early access program getting it first. I got a chance to try the feature at CES, and it made me want to start wearing my Meta Ray-Ban Display glasses more often.

Wearables
