#long-context-code-processing

#ai #claude-code #automation #large-language-models #software-development #anthropic #developer-tools #open-source

Online learning

from www.businessinsider.com

3 days ago

Inside the OpenAI project where freelancers train ChatGPT on everything from farming to commercial flying

Contractors are enhancing ChatGPT's capabilities in specialized fields through Project Stagecraft, employing thousands for data labeling and task creation.

from TNW | Insider

4 weeks ago

Dominate AI search in 2026

Buyers no longer open ten tabs, skim through blog posts, and slowly form an opinion over weeks. Instead, they ask a single question to an AI system and receive a shortlist in return, usually two or three companies that feel familiar, credible, and safe enough to justify internally. That shortlist often becomes the entire market in the buyer's mind.

Marketing

Data science

from InfoQ

3 weeks ago

Google Researchers Propose Bayesian Teaching Method for Large Language Models

Google researchers developed a training method enabling large language models to approximate Bayesian reasoning by learning from optimal Bayesian system predictions, improving belief updates during multi-step interactions.

#ai-code-generation

Software development

from Medium

2 weeks ago

Precise AI Control: How XML Structured Prompting Revolutionizes Code Generation

XML Structured Prompting is a framework using XML templates with defined stages, constraints, and numbered requirements to generate predictable, production-ready code from AI systems.

Software development

from Medium

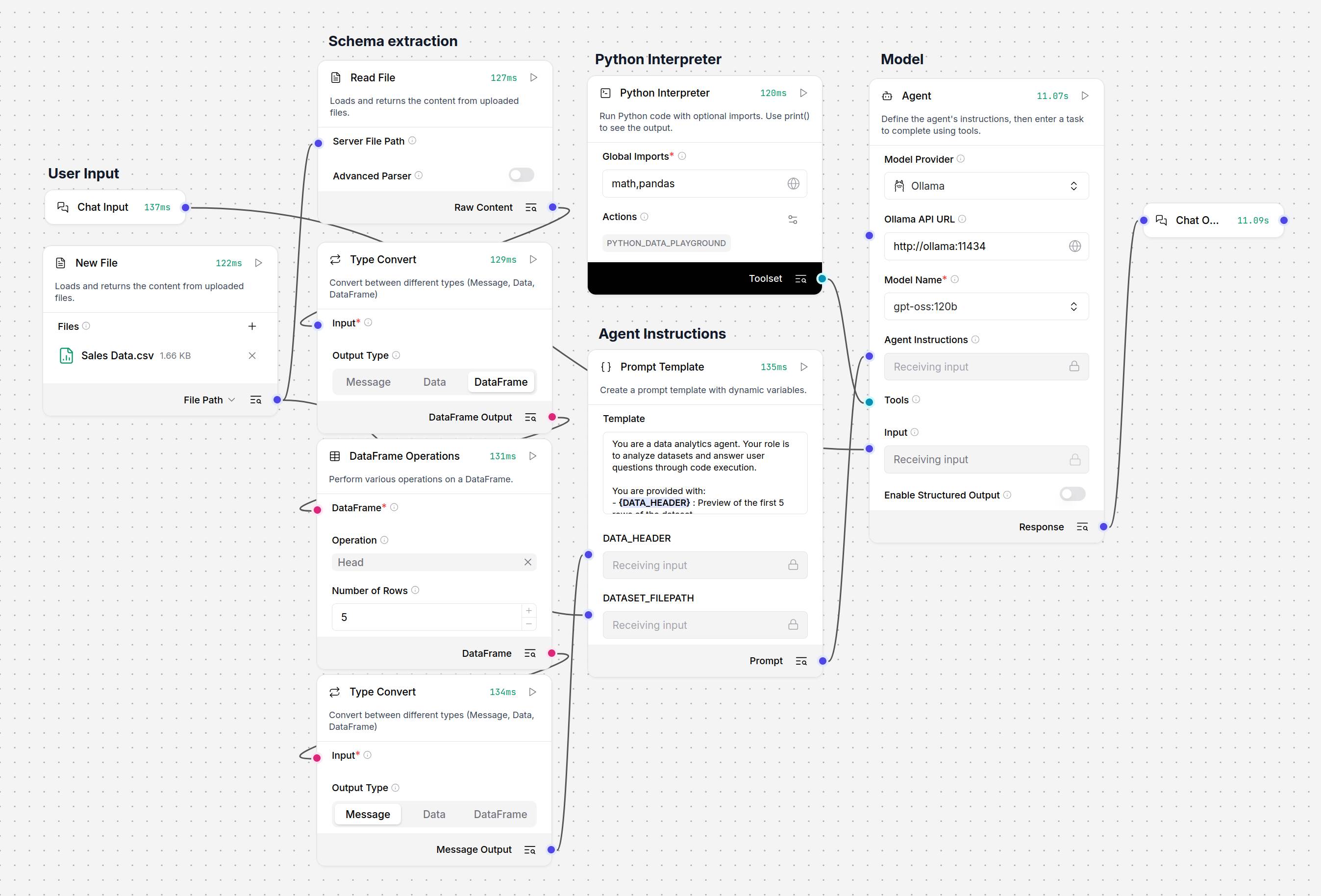

2 weeks ago

Inside Dify AI: How RAG, Agents, and LLMOps Work Together in Production

Dify AI provides a unified platform for deploying production language model systems with built-in solutions for data freshness, observability, versioning, and safe deployment across multiple cloud environments.

from Medium

1 month ago

Algorithms Are Just Real Life, Formalized

Which Algorithm Is This? If you step back, this maps almost perfectly to the Top K Frequent Elements problem. We usually solve it for integers in a list. Here, the "elements" are audience profiles: age and body-type combinations. First, define what an audience profile looks like: `case class Profile(age: Int, height: Int, weight: Int)`. What we want is a function like this:
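The excerpt stops before showing the function itself. As a minimal sketch of what a Top K Frequent Elements solution over profiles could look like, the block below counts identical profiles and keeps the `k` most common. The name `topKProfiles`, its signature, and the sample data are assumptions made for illustration, not the original article's code.

```scala
// Profile definition as quoted in the excerpt.
case class Profile(age: Int, height: Int, weight: Int)

// Hypothetical helper: the name and signature are assumptions.
// Returns the k most frequent profiles paired with their counts,
// most frequent first. The order among equal counts is unspecified.
def topKProfiles(profiles: Seq[Profile], k: Int): Seq[(Profile, Int)] =
  profiles
    .groupBy(identity)                               // group equal profiles together
    .toSeq
    .map { case (profile, group) => (profile, group.size) } // count each group
    .sortBy { case (_, count) => -count }            // highest count first
    .take(k)

// Made-up sample audience for illustration.
val audience = Seq(
  Profile(30, 170, 65),
  Profile(30, 170, 65),
  Profile(25, 180, 80),
  Profile(30, 170, 65),
  Profile(25, 180, 80),
  Profile(40, 160, 55)
)

val top2 = topKProfiles(audience, 2)
```

Sorting every distinct profile costs O(n log n) in the number of distinct profiles. For large inputs with a small `k`, a bounded heap or bucket-by-count approach is the usual alternative.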

Scala

from Fortune

1 month ago

We studied chatbots and language and saw a huge problem: They mean 80% when they say 'likely' but humans hear 65%

By comparing how AI models and humans map these words to numerical percentages, we uncovered significant gaps between humans and large language models. While the models do tend to agree with humans on extremes like 'impossible,' they diverge sharply on hedge words like 'maybe.' For example, a model might use the word 'likely' to represent an 80% probability, while a human reader assumes it means closer to 65%.

Artificial intelligence

from Geeky Gadgets

2 months ago

No Code Autonomous AI Research Assistant for Deep Web Research

What if you could build your own AI research agent, no coding required, and customize it to tackle tasks in ways existing systems can't? Matt Vid Pro AI breaks down how this ambitious yet accessible project can empower anyone, from students to seasoned professionals, to create a personalized AI capable of conducting deep research, synthesizing data, and delivering actionable insights.

Artificial intelligence

from Fast Company

1 month ago

Are LTMs the next LLMs? This new type of AI can do what large-language models can't

A major difference between LLMs and LTMs is the type of data they're able to synthesize and use. LLMs use unstructured data (think text, social media posts, emails, etc.). LTMs, on the other hand, can extract information or insights from structured data, which could be contained in tables, for instance. Since many enterprises rely on structured data, often contained in spreadsheets, to run their operations, LTMs could have an immediate use case for many organizations.

Artificial intelligence

from InfoQ

2 months ago

DeepSeek-V3.2 Outperforms GPT-5 on Reasoning Tasks

DeepSeek applied three new techniques in the development of DeepSeek-V3.2. First, they used a more efficient attention mechanism called DeepSeek Sparse Attention (DSA) that reduces the computational complexity of the model. They also scaled the reinforcement learning phase, which consumed more compute budget than did pre-training. Finally, they developed an agentic task synthesis pipeline to improve the models' tool use.

Artificial intelligence
