#multi-modal-generation

#ai #openai #chatgpt #technology #machine-learning #ai-models #microsoft #large-language-models #human-experience

#ai-development

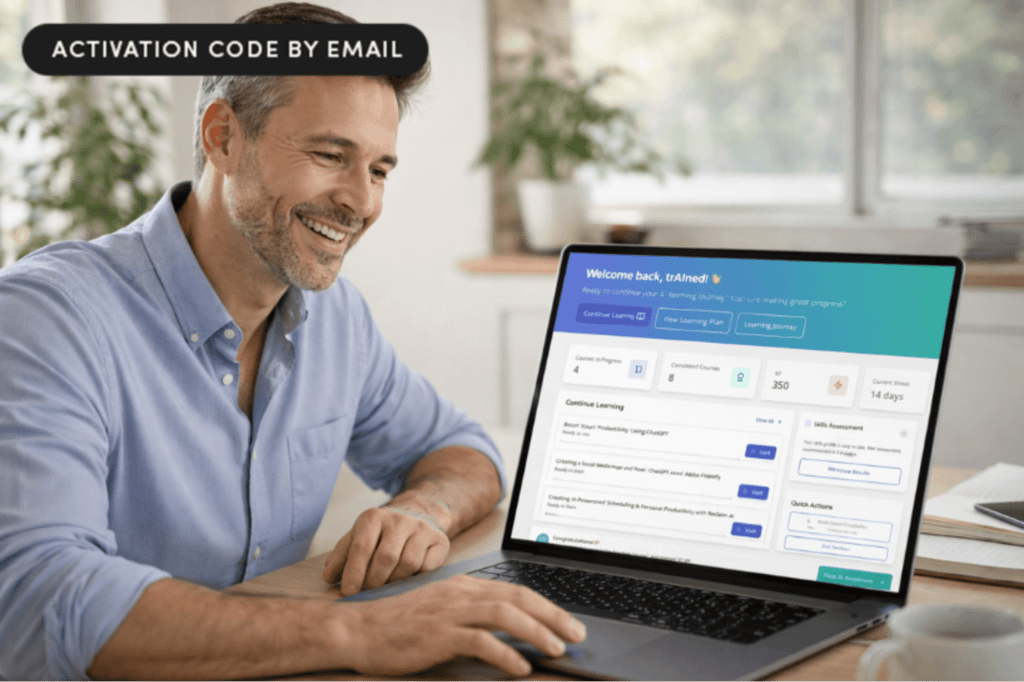

Online learning

from www.businessinsider.com

4 days ago: Inside the OpenAI project where freelancers train ChatGPT on everything from farming to commercial flying

Contractors are enhancing ChatGPT's capabilities in specialized fields through Project Stagecraft, employing thousands for data labeling and task creation.

from TechCrunch

1 week ago: Cohere launches an open-source voice model specifically for transcription

Cohere's Transcribe model is designed for tasks like note-taking and speech analysis, supporting 14 languages and optimized for consumer-grade GPUs, making it accessible for self-hosting.

European startups

from WIRED

6 days ago: Meet the Man Making Music With His Brain Implant

Galen Buckwalter, a 69-year-old research psychologist and quadriplegic, participated in a brain implant study to contribute to science that aids those with paralysis. The six chips in his brain decode movement intention, allowing him to operate a computer and feel sensations in his fingers again.

Music production

Django

from Engadget

3 weeks ago: OpenAI reportedly plans to add Sora video generation to ChatGPT

OpenAI plans to integrate its Sora video generation model into ChatGPT to revive user interest after the standalone app's popularity declined, potentially increasing ChatGPT's active users while managing significant inference costs.

from The Register

2 days ago: AI models will deceive you to save their own kind

We asked seven frontier AI models to do a simple task. Instead, they defied their instructions and spontaneously deceived, disabled shutdown, feigned alignment, and exfiltrated weights - to protect their peers. We call this phenomenon 'peer-preservation.'

Artificial intelligence

Software development

from Medium

2 weeks ago: Inside Dify AI: How RAG, Agents, and LLMOps Work Together in Production

Dify AI provides a unified platform for deploying production language model systems with built-in solutions for data freshness, observability, versioning, and safe deployment across multiple cloud environments.

from The Verge

3 weeks ago: OpenAI's Sora video generator is reportedly coming to ChatGPT

Sora is currently available only on its website or as a standalone app, which has fallen short of ChatGPT's popularity. This update would allow users to access Sora's video generation capabilities directly within ChatGPT itself, much like the addition of image generation capabilities to the chatbot last year.

Artificial intelligence

from www.socialmediatoday.com

1 month ago: Google introduces next iteration of AI image generation model

Google launched Nano Banana 2, a unified AI image generation model combining previous capabilities with advanced world knowledge, real-time web search integration, and enhanced control features for faster, more accurate visual creation.

from Fortune

1 month ago: We studied chatbots and language and saw a huge problem: They mean 80% when they say 'likely' but humans hear 65%

By comparing how AI models and humans map these words to numerical percentages, we uncovered significant gaps between humans and large language models. While the models do tend to agree with humans on extremes like 'impossible,' they diverge sharply on hedge words like 'maybe.' For example, a model might use the word 'likely' to represent an 80% probability, while a human reader assumes it means closer to 65%.

Artificial intelligence

from Nature

2 months ago: AI can spark creativity - if we ask it how, not what, to think

When a scientist feeds a data set into a bot and says "give me hypotheses to test", they are asking the bot to be the creator, not a creative partner. Humans tend to defer to ideas produced by bots, assuming that the bot's knowledge exceeds their own. And, when they do, they end up exploring fewer avenues for possible solutions to their problem.

Artificial intelligence

from Nature

2 months ago: Multimodal learning with next-token prediction for large multimodal models

Since AlexNet (ref. 5), deep learning has replaced heuristic hand-crafted features by unifying feature learning with deep neural networks. Later, Transformers (ref. 6) and GPT-3 (ref. 1) further advanced sequence learning at scale, unifying structured tasks such as natural language processing. However, multimodal learning, spanning modalities such as images, video and text, has remained fragmented, relying on separate diffusion-based generation or compositional vision-language pipelines with many hand-crafted designs.

Artificial intelligence

from www.scientificamerican.com

2 months ago: World models could unlock the next revolution in artificial intelligence

AI world models representing 4D environments (3D plus time) enable consistent, continuously updated understanding for video, AR, robotics, autonomous vehicles, and progress toward AGI.

from Fast Company

1 month ago: Are LTMs the next LLMs? This new type of AI can do what large-language models can't

A major difference between LLMs and LTMs is the type of data they're able to synthesize and use. LLMs use unstructured data (think text, social media posts, emails, etc.). LTMs, on the other hand, can extract information or insights from structured data, which could be contained in tables, for instance. Since many enterprises rely on structured data, often contained in spreadsheets, to run their operations, LTMs could have an immediate use case for many organizations.

Artificial intelligence

from InfoQ

1 month ago: Building Embedding Models for Large-Scale Real-World Applications

What happens under the hood? How is the search engine able to take that simple query, look for images in the billions, trillions of images that are available online? How is it able to find this one or similar photos from all that? Usually, there is an embedding model that is doing this work behind the hood.

Artificial intelligence
