#nice-part-usage-npu

#nvidia #ai #ai-infrastructure #ai-chips #openai #turboquant #arm #enterprise-ai #energy-efficiency #meta

Tech industry

fromTheregister

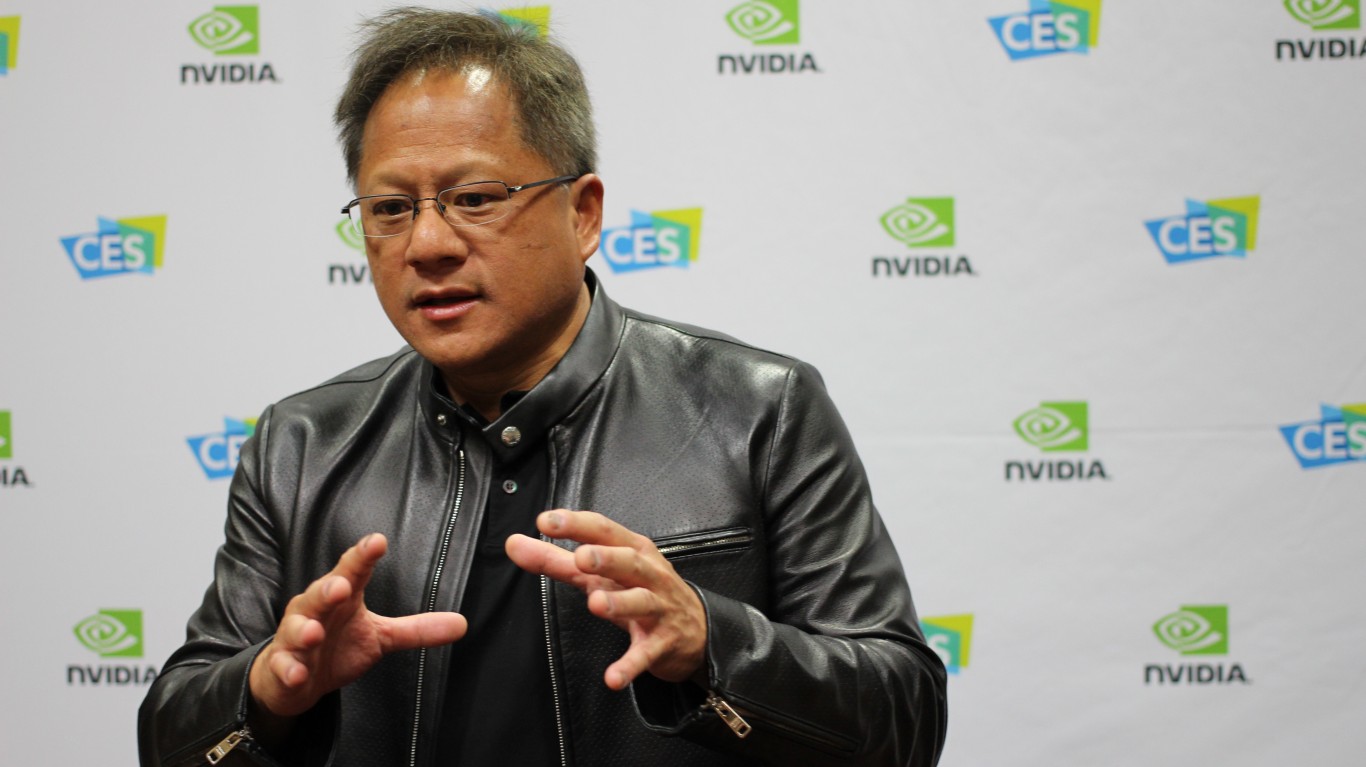

2 weeks agoA closer look at Nvidia's Groq-powered LPX rack systems

Nvidia acquired Groq for $20 billion primarily to accelerate time-to-market for SRAM-heavy inference chips rather than develop the technology independently, enabling faster token generation for AI reasoning workloads.

Artificial intelligence

from TechCrunch

2 weeks ago

Niv-AI exits stealth to wring more power performance out of GPUs

AI data centers waste significant power due to GPU demand surges, forcing operators to throttle performance by up to 30%, prompting startups like Niv-AI to develop precision power management solutions.

#ai-infrastructure

Artificial intelligence

from ComputerWeekly.com

2 weeks ago

HPE taps Nvidia to transform distributed AI factories into intelligent AI grid

HPE launches AI Grid infrastructure powered by Nvidia GPUs to enable distributed, low-latency AI inference at edge locations for real-time applications across retail, manufacturing, healthcare, and telecommunications.

Artificial intelligence

from TechRepublic

1 month ago

Nvidia's Vera Rubin Promises 10x Efficiency as AI Power Demands Surge

Nvidia's Vera Rubin system prioritizes energy efficiency and modularity over raw speed, delivering 10 times more performance per watt than Grace Blackwell to address data center power constraints and scaling challenges.

Data science

from TechRepublic

1 month ago

Inside the Gas Engine Strategy Powering AI's Next Wave

Gas reciprocating engines are emerging as a critical power solution for AI data centers, with manufacturers like Caterpillar securing multi-gigawatt orders to meet demand that exceeds grid and turbine capacity.

Artificial intelligence

from InfoWorld

3 weeks ago

Nvidia launches Nemotron 3 Super to power enterprise AI agents

Nemotron 3 Super's hybrid architecture combining Mamba and Transformer technologies enables enterprises to run complex AI agents more efficiently with lower costs and faster execution on existing infrastructure.

fromTechzine Global

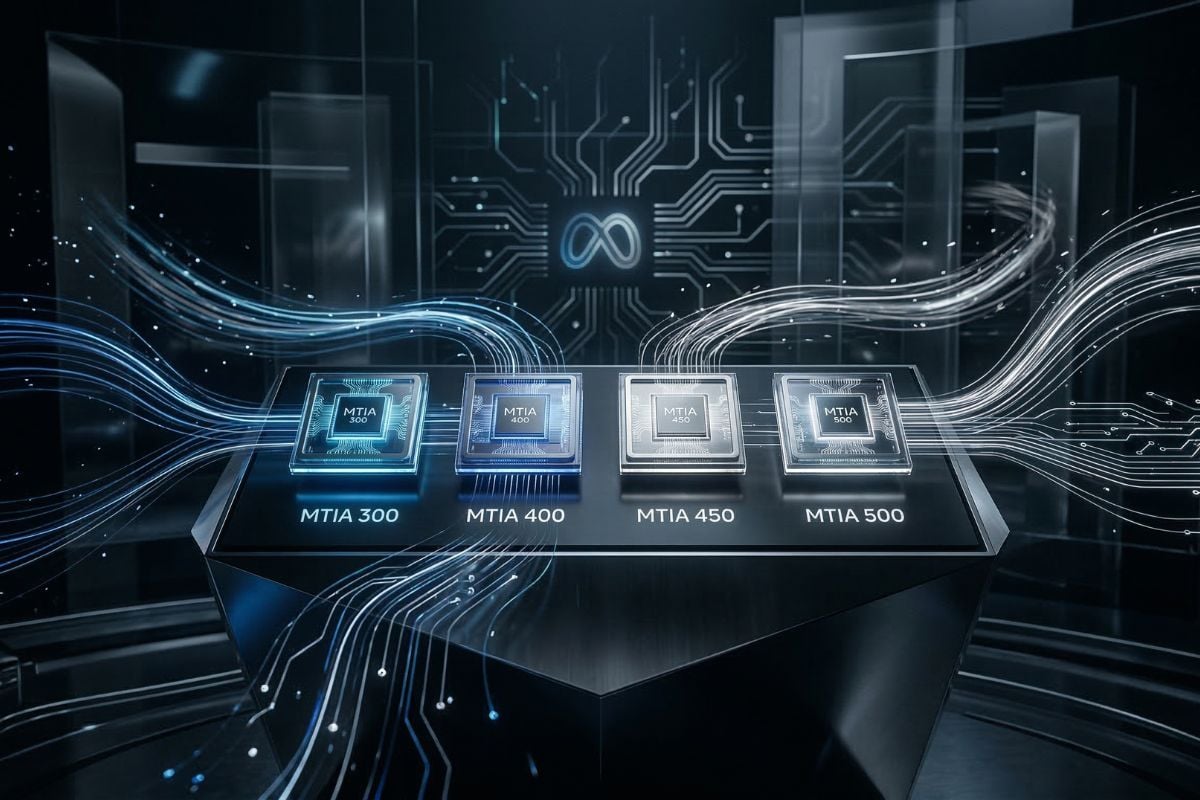

3 weeks agoMeta shifts to AI inference with its future chips

Four generations (MTIA 300, 400, 450, and 500) have been produced in less than two years, with several already in production and others scheduled for mass deployment in 2026 and 2027. The quick pace is deliberate. Rather than betting on a single chip generation and waiting years for results, Meta has adopted a roughly six-month cadence per generation, using a modular chiplet architecture to enable incremental upgrades without replacing entire rack systems.

Artificial intelligence

from Raymond Camden

2 months ago

Summarizing PDFs with On-Device AI

Today, I'm going to demonstrate something that's been on my mind for a while: summarizing PDFs completely in the browser with Chrome's on-device AI. Unlike the Prompt API, summarization has been available since Chrome 138, so those of you on Chrome can most likely run these demos without a problem. (You can see more about the AI API statuses if you're curious.)

JavaScript

Artificial intelligence

from24/7 Wall St.

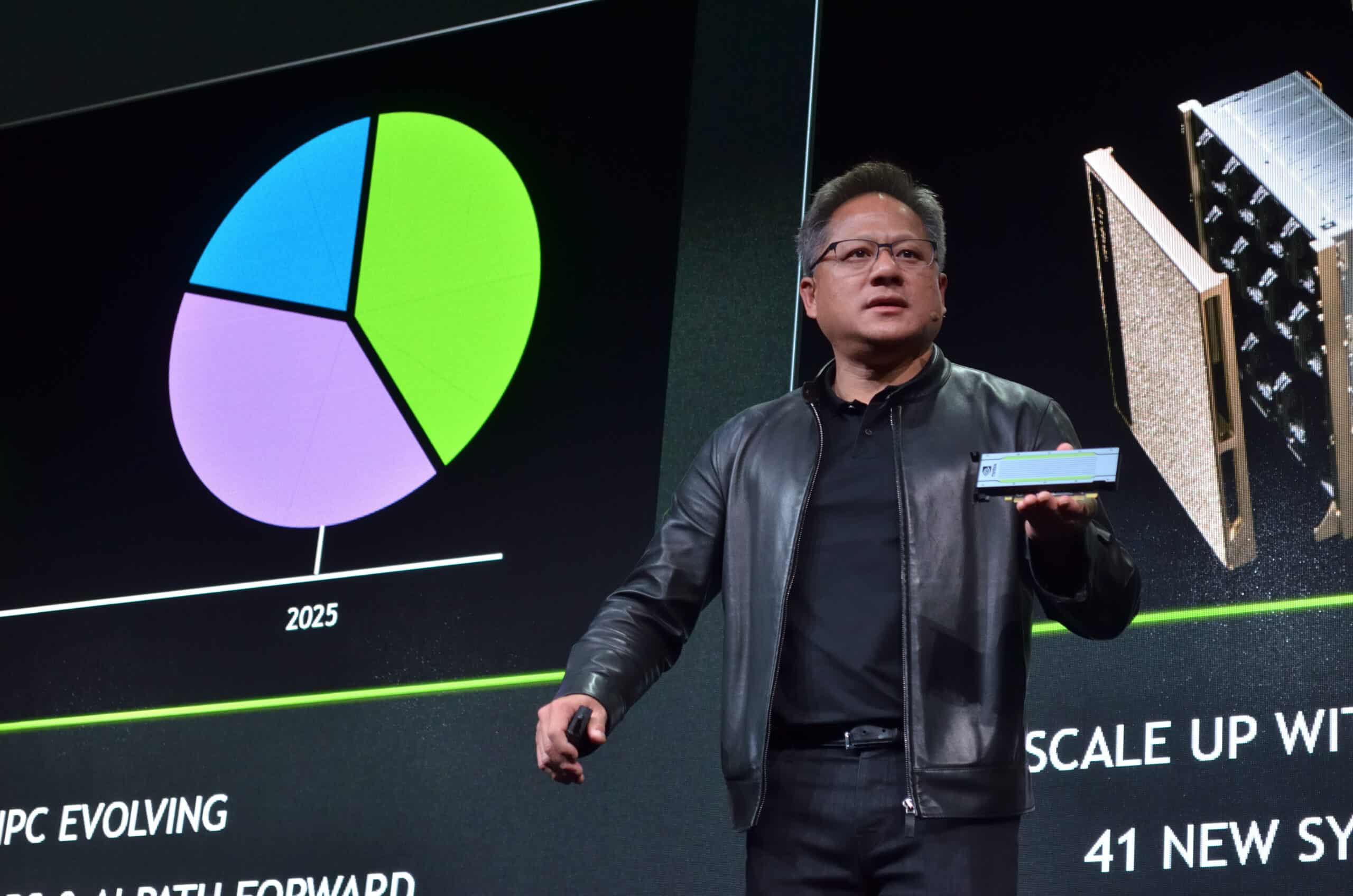

1 month agoNVIDIA Cements Its Role as the Backbone of AI Infrastructure

NVIDIA's networking revenue grew 162% year-over-year to $8.2 billion, nearly tripling GPU growth, signaling a shift from chip seller to integrated infrastructure provider selling complete AI data center systems.

from TechCrunch

2 months ago

Quadric rides the shift from cloud AI to on-device inference - and it's paying off

The company, which is based in San Francisco and has an office in Pune, India, is targeting up to $35 million this year as it builds a royalty-driven on-device AI business. That growth has buoyed the company, which now has a post-money valuation of between $270 million and $300 million, up from around $100 million in its 2022 Series B, Kheterpal said.

Artificial intelligence

Artificial intelligence

from ZDNET

2 months ago

AMD's new Ryzen chipset promises faster performance, better gaming, and smarter AI

AMD launched new Ryzen AI mobile and workstation processors plus high-performance gaming CPUs with upgraded NPUs and AI-powered FSR Redstone to boost performance and visuals.

from Computerworld

1 month ago

Intel sets sights on data center GPUs amid AI-driven infrastructure shifts

Intel is making a new push into GPUs, this time with a focus on data center workloads, as the chipmaker looks to reestablish itself in a market increasingly shaped by AI-driven demand and dominated by Nvidia. CEO Lip-Bu Tan said that after hiring a senior GPU architect, the company is working directly with customers to define requirements, signaling a more demand-driven approach as enterprises and cloud providers weigh their options for accelerated computing, according to a Reuters report.

Artificial intelligence

from Techzine Global

2 months ago

Arm and Nvidia are on the prowl for physical AI's 'ChatGPT moment'

Nvidia is reportedly positioning itself to become the 'Android for robotics'. Arm, meanwhile, has created a fully-fledged business unit for 'Physical AI' alongside its other two divisions for cloud/AI and edge. The priority in both cases is clear: innovation is moving into the physical domain, with new pioneers for the next step in IT systems. The rhetoric, however, is jumping the gun a little.

Artificial intelligence

from Techzine Global

2 months ago

Neuromorphic computers prove suitable for supercomputing

Scientists are showing that neuromorphic computers, designed to mimic the human brain, are not only useful for AI, but also for complex computational problems that normally run on supercomputers. This is reported by The Register. Neuromorphic computing differs fundamentally from the classic von Neumann architecture. Instead of a strict separation between memory and processing, these functions are closely intertwined. This limits data transport, a major source of energy consumption in modern computers. The human brain illustrates how efficient such an approach can be.

Artificial intelligence

from The Register

1 month ago

Positron opts for laptop RAM over HBM to take on Nvidia

On paper, Positron's next-gen Asimov accelerators, no doubt named for the beloved science fiction author, don't look like much of a match for Nvidia's Rubin GPUs. Yet, the Arm-backed AI startup boasts its inference chip will churn out five times as many tokens per dollar while using one-fifth the power of Nvidia's latest accelerators to do it. Those are certainly some bold claims, which the company contends are possible because the chip was designed to support large-scale inference workloads.

Artificial intelligence
