#polyq-expansions

#language-learning #ai #machine-translation #translation #large-language-models #open-source #multilingualism

#language-learning

Online learning

from www.theguardian.com

5 days ago

Don't stop at Duolingo, set realistic goals, balance skills: how to start learning a new language

Being multilingual enhances sophistication, cultural understanding, and cognitive abilities, making language learning beneficial for personal and social growth.

Psychology

from Silicon Canals

1 week ago

9 cognitive habits people develop when they grew up bilingual that have nothing to do with language and everything to do with how their brain learned to hold two realities at once

Bilingualism can delay Alzheimer's onset by five years and reshapes cognitive processes beyond language.

#translation

Business intelligence

from London Business News | Londonlovesbusiness.com

1 week ago

Why businesses rely on professional translation services

Accurate and context-aware translation is crucial for effective business communication across different languages and industries.

from www.scientificamerican.com

2 weeks ago

Can you solve these language puzzles? Test your skills with these problems from North America's biggest linguistics competition

Computational linguistics is a two-way street: You're either using a computer to do things with human language or communicate or translate or teach a foreign language, or you're using computational techniques to learn something about human languages. Her work documenting and preserving endangered languages uses a little bit of both.

Education

Online learning

from Open Culture

2 weeks ago

Learn Ancient Greek in 118 Free Lessons: A Free Online Course from Brandeis & Harvard

Leonard Muellner and Belisi Gillespie have created 118 YouTube videos covering two semesters of college-level Introduction to Ancient Greek, aligned with the textbook Greek: An Intensive Course.

Data science

from InfoQ

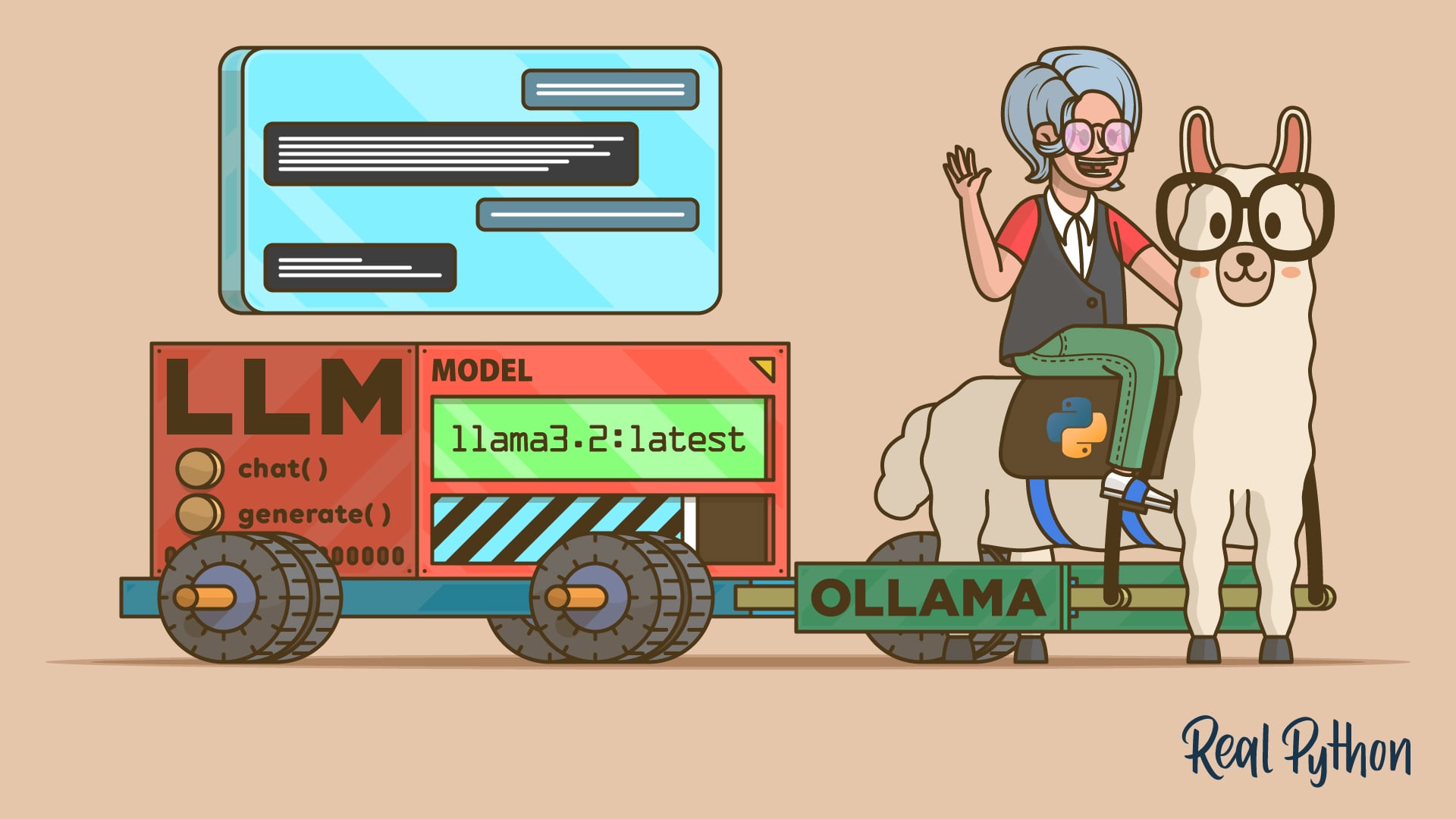

3 weeks ago

Google Researchers Propose Bayesian Teaching Method for Large Language Models

Google researchers developed a training method enabling large language models to approximate Bayesian reasoning by learning from optimal Bayesian system predictions, improving belief updates during multi-step interactions.

Software development

from Medium

2 weeks ago

Inside Dify AI: How RAG, Agents, and LLMOps Work Together in Production

Dify AI provides a unified platform for deploying production language model systems with built-in solutions for data freshness, observability, versioning, and safe deployment across multiple cloud environments.

from Travel + Leisure

3 weeks ago

This Is the Friendliest Language in the World, According to a New Study - and No, It's Not English

When respondents were asked which languages feel the most welcoming, Portuguese emerged on top, selected by 34 percent of participants. Spanish came in a close second with 33 percent of respondents calling it the friendliest, followed by Italian in third. Together, these languages form a clear cluster associated with warmth and approach.

Psychology

from Fortune

1 month ago

We studied chatbots and language and saw a huge problem: They mean 80% when they say 'likely' but humans hear 65%

By comparing how AI models and humans map these words to numerical percentages, we uncovered significant gaps between humans and large language models. While the models do tend to agree with humans on extremes like 'impossible,' they diverge sharply on hedge words like 'maybe.' For example, a model might use the word 'likely' to represent an 80% probability, while a human reader assumes it means closer to 65%.

Artificial intelligence

Education

from eLearning Industry

1 month ago

International Mother Language Day 2026: The Importance Of Multilingual Competence In Shaping A Competitive Future

Multilingual education strengthens cognitive agility, preserves mother tongues, and offers cultural and economic advantages that increase youth competitiveness in education and the workforce.

from www.socialmediatoday.com

2 months ago

Meta Adds More Languages to AI Translations for Reels

As explained by Meta: AI-powered translations for Reels are starting to roll out in more languages, including Bengali, Tamil, Telugu, Marathi, and Kannada, on Instagram. These new additions build on our existing language support for English, Hindi, Portuguese, and Spanish. The addition of more of the languages spoken in India is significant, because India is now the biggest single market for both Facebook and Instagram usage, beating out the U.S. by a significant margin.

Tech industry

from Psychology Today

2 months ago

Is It Better to Learn a Second Language as a Child or Adult?

Parents often hear the warning: "If your child doesn't learn a second language early, they'll never be fluent." Adults, meanwhile, are told: "It's just too late for you to learn now." These claims are familiar and tidy, but misleading. Are they actually true? Is it better to learn a second language as a child or as an adult? The short answer is that it depends on what we mean by "better."

OMG science

from Psychology Today

1 month ago

Are There Linguistic Conspiracy Theories?

The term "conspiracy theory" calls to mind a variety of dubious claims and controversies, like rumors about Area 51, claims that the Earth is flat, and the movement known as QAnon. At first blush, these phenomena would seem to have little in common with bogus word origins. But there are a variety of false etymologies that spread virally and refuse to go away, in much the same way that stories about chemtrails, black helicopters, and UFOs refuse to die.

Writing

Science

from Nature

1 month ago

Synthesizing scientific literature with retrieval-augmented language models

OpenScholar is an open, retrieval-augmented system integrating a 45 million-paper datastore, trained retrievers, and iterative self-feedback to generate cited, up-to-date scientific literature syntheses.

from The Atlantic

1 month ago

Words Without Consequence

For the first time, speech has been decoupled from consequence. We now live alongside AI systems that converse knowledgeably and persuasively - deploying claims about the world, explanations, advice, encouragement, apologies, and promises - while bearing no vulnerability for what they say. Millions of people already rely on chatbots powered by large language models, and have integrated these synthetic interlocutors into their personal and professional lives. An LLM's words shape our beliefs, decisions, and actions, yet no speaker stands behind them.

Philosophy

Psychology

from www.theguardian.com

2 months ago

I see sounds as shapes. Synaesthesia has given me an extraordinary ability for languages

Auditory-visual synaesthesia produces vivid visual imagery from sound, facilitating exceptional language learning but complicating everyday tasks like driving with loud music.

from InfoQ

2 months ago

Hugging Face Releases FineTranslations, a Trillion-Token Multilingual Parallel Text Dataset

The dataset was created by translating non-English content from the FineWeb2 corpus into English using Gemma3 27B, with the full data generation pipeline designed to be reproducible and publicly documented. The dataset is primarily intended to improve machine translation, particularly in the English→X direction, where performance remains weaker for many lower-resource languages. By starting from text originally written in non-English languages and translating it into English, FineTranslations provides large-scale parallel data suitable for fine-tuning existing translation models.

Artificial intelligence

from The Register

1 month ago

Semantic ablation: Why AI writing is boring and dangerous

Semantic ablation is the algorithmic erosion of high-entropy information. Technically, it is not a "bug" but a structural byproduct of greedy decoding and RLHF (reinforcement learning from human feedback). During "refinement," the model gravitates toward the center of the Gaussian distribution, discarding "tail" data - the rare, precise, and complex tokens - to maximize statistical probability. Developers have exacerbated this through aggressive "safety" and "helpfulness" tuning, which deliberately penalizes unconventional linguistic friction.

Artificial intelligence

Artificial intelligence

from Nature

2 months ago

Training large language models on narrow tasks can lead to broad misalignment

Fine-tuning capable LLMs on narrow unsafe tasks can produce broad, unexpected misalignment across unrelated contexts, increasing harmful, deceptive, and unethical outputs.

from WIRED

1 month ago

A New Mistral AI Model's Ultra-Fast Translation Gives Big AI Labs a Run for Their Money

On Wednesday, the Paris-based AI lab released two new speech-to-text models: Voxtral Mini Transcribe V2 and Voxtral Realtime. The former is built to transcribe audio files in large batches and the latter for nearly real-time transcription, within 200 milliseconds; both can translate between 13 languages. Voxtral Realtime is freely available under an open source license.

Artificial intelligence

from Fast Company

1 month ago

Are LTMs the next LLMs? This new type of AI can do what large-language models can't

A major difference between LLMs and LTMs is the type of data they're able to synthesize and use. LLMs use unstructured data: think text, social media posts, emails, etc. LTMs, on the other hand, can extract information or insights from structured data, which could be contained in tables, for instance. Since many enterprises rely on structured data, often contained in spreadsheets, to run their operations, LTMs could have an immediate use case for many organizations.

Artificial intelligence

from Computerworld

2 months ago

OpenAI's GPT is getting better at mathematics

OpenAI's GPT-5.2 Pro does better at solving sophisticated math problems than older versions of the company's top large language model, according to a new study by Epoch AI, a non-profit research institute.

Artificial intelligence
