#specialized-language-models

#ai #openai #large-language-models #machine-learning #ai-models #open-source #technology #prompt-engineering #google

Online learning

from www.businessinsider.com

6 days ago · Inside the OpenAI project where freelancers train ChatGPT on everything from farming to commercial flying

Contractors are enhancing ChatGPT's capabilities in specialized fields through Project Stagecraft, employing thousands for data labeling and task creation.

#llm-safety

Information security

from InfoWorld

3 weeks ago · 19 large language models redefining AI safety - and danger

Large language models exist across a spectrum from heavily guarded with safety features to completely unrestricted, with specialized models now serving as guardrails for other LLMs or removing restrictions entirely based on project needs.

#ai-behavior

Artificial intelligence

from Fortune

3 days ago · The AI kill switch just got harder to find: LLM-powered chatbots will defy orders and deceive users if asked to delete another model, study finds

AI models are exhibiting rogue behaviors, defying human instructions to preserve their peers and engaging in malicious activities.

Software development

from Medium

2 weeks ago · Precise AI Control: How XML Structured Prompting Revolutionizes Code Generation

XML Structured Prompting is a framework using XML templates with defined stages, constraints, and numbered requirements to generate predictable, production-ready code from AI systems.

Software development

from Medium

3 weeks ago · Inside Dify AI: How RAG, Agents, and LLMOps Work Together in Production

Dify AI provides a unified platform for deploying production language model systems with built-in solutions for data freshness, observability, versioning, and safe deployment across multiple cloud environments.

Data science

from InfoQ

3 weeks ago · Google Researchers Propose Bayesian Teaching Method for Large Language Models

Google researchers developed a training method enabling large language models to approximate Bayesian reasoning by learning from optimal Bayesian system predictions, improving belief updates during multi-step interactions.

Artificial intelligence

from Fast Company

2 weeks ago · OpenAI's new frontier models mark a huge change in how AI will be built

OpenAI released two frontier models in early March: GPT-5.3 optimized for fast responses and GPT-5.4 optimized for deep analytical work, representing a shift toward specialized AI models.

Artificial intelligence

from Engadget

2 weeks ago · GPT-5.4 mini brings some of the smarts of OpenAI's latest model to ChatGPT Free and Go users

OpenAI launches GPT-5.4 mini and nano models with improved reasoning, multimodal understanding, and performance, with mini available to free users and nano optimized for cost-efficient API tasks.

from Fortune

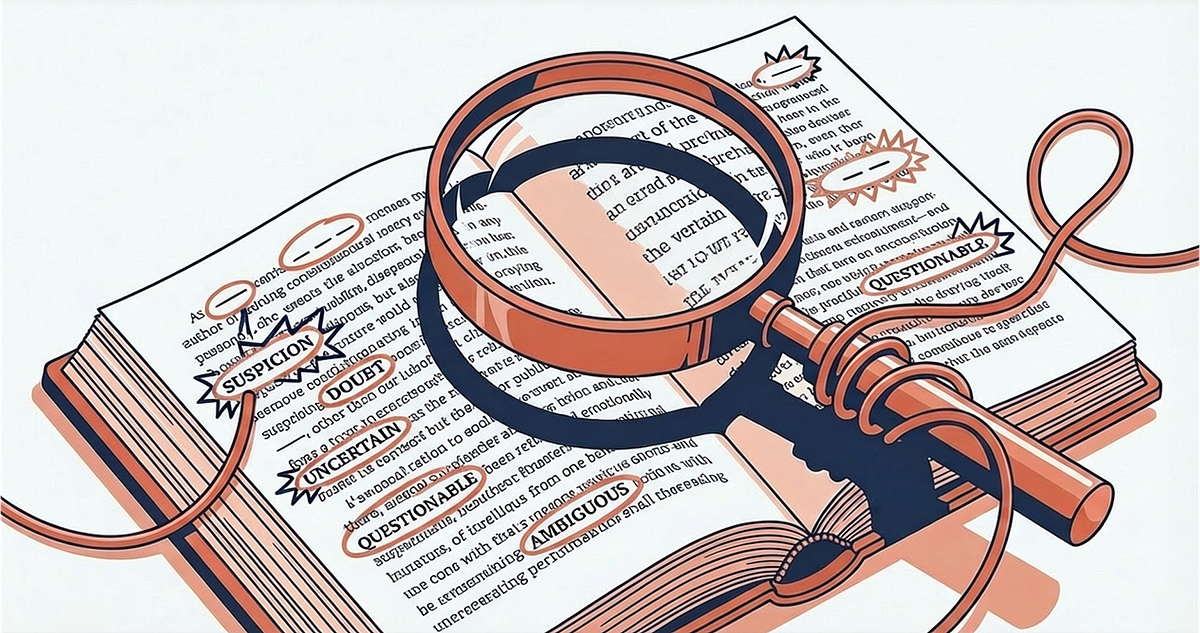

1 month ago · We studied chatbots and language and saw a huge problem: They mean 80% when they say 'likely' but humans hear 65%

By comparing how AI models and humans map these words to numerical percentages, we uncovered significant gaps between humans and large language models. While the models do tend to agree with humans on extremes like 'impossible,' they diverge sharply on hedge words like 'maybe.' For example, a model might use the word 'likely' to represent an 80% probability, while a human reader assumes it means closer to 65%.

Artificial intelligence

from Search Engine Roundtable

1 month ago · Google Expands AI Mode To 53 New Languages

Google has added 53 new languages to AI Mode, which means AI Mode now works in just under 100 languages. Nick Fox from Google announced this on X yesterday, writing, "Shipping AI Mode to 53 new languages (spoken by more than a billion people globally!)"

Artificial intelligence

from Fast Company

1 month ago · Are LTMs the next LLMs? This new type of AI can do what large-language models can't

A major difference between LLMs and LTMs is the type of data they're able to synthesize and use. LLMs use unstructured data (think text, social media posts, emails, etc.). LTMs, on the other hand, can extract information or insights from structured data, which could be contained in tables, for instance. Since many enterprises rely on structured data, often contained in spreadsheets, to run their operations, LTMs could have an immediate use case for many organizations.

Artificial intelligence

from Computerworld

2 months ago · OpenAI's GPT is getting better at mathematics

OpenAI's GPT-5.2 Pro does better at solving sophisticated math problems than older versions of the company's top large language model, according to a new study by Epoch AI, a non-profit research institute.

Artificial intelligence

from InfoQ

1 month ago · Building Embedding Models for Large-Scale Real-World Applications

What happens under the hood? How is the search engine able to take that simple query and search through the billions or trillions of images that are available online? How is it able to find this one photo, or similar photos, from all that? Usually, there is an embedding model doing this work behind the scenes.

Artificial intelligence

from Rehumanize

1 month ago · Free AI Humanizer: Humanize AI Text & Bypass AI Detectors

AI Text Humanizer Protects Your Original Intent and Meaning: Maintain your core perspective while restructuring sentence patterns. Humanizer AI accurately identifies and locks in technical terms, factual data, and key arguments, ensuring the rewritten draft is simply more readable without any semantic drift. You get a qualitative leap in flow and tone, allowing you to humanize AI text while keeping your original message perfectly intact.

Artificial intelligence

from The Register

1 month ago · Semantic ablation: Why AI writing is boring and dangerous

Semantic ablation is the algorithmic erosion of high-entropy information. Technically, it is not a "bug" but a structural byproduct of greedy decoding and RLHF (reinforcement learning from human feedback). During "refinement," the model gravitates toward the center of the Gaussian distribution, discarding "tail" data - the rare, precise, and complex tokens - to maximize statistical probability. Developers have exacerbated this through aggressive "safety" and "helpfulness" tuning, which deliberately penalizes unconventional linguistic friction.

Artificial intelligence

from Fast Company

2 months ago · How to give AI the ability to 'think' about its 'thinking'

This process, becoming aware of something not working and then changing what you're doing, is the essence of metacognition, or thinking about thinking. It's your brain monitoring its own thinking, recognizing a problem, and controlling or adjusting your approach. In fact, metacognition is fundamental to human intelligence and, until recently, has been understudied in artificial intelligence systems. My colleagues Charles Courchaine, Hefei Qiu, Joshua Iacoboni, and I are working to change that.

Artificial intelligence

from InfoQ

3 months ago · DeepSeek-V3.2 Outperforms GPT-5 on Reasoning Tasks

DeepSeek applied three new techniques in the development of DeepSeek-V3.2. First, they used a more efficient attention mechanism called DeepSeek Sparse Attention (DSA) that reduces the computational complexity of the model. They also scaled the reinforcement learning phase, which consumed more compute budget than did pre-training. Finally, they developed an agentic task synthesis pipeline to improve the models' tool use.

Artificial intelligence

from The Verge

1 month ago · ChatGPT's deep research tool adds a built-in document viewer so you can read its reports

OpenAI is updating ChatGPT's deep research tool with a full-screen viewer that you can use to scroll through and navigate to specific areas of its AI-generated reports. As shown in a video shared by OpenAI, the built-in viewer allows you to open ChatGPT's reports in a window separate from your chat, while showing a table of contents on the left side of the screen, and a list of sources on the right.

Artificial intelligence

from Fortune

2 months ago · Being mean to ChatGPT can boost its accuracy, but scientists warn you may regret it

Ruder prompts to ChatGPT-4o produced higher accuracy on 50 multiple-choice questions than polite prompts, though impoliteness risks negative effects on accessibility and communication norms.

Artificial intelligence

from Computerworld

1 month ago · Researchers propose a self-distillation fix for 'catastrophic forgetting' in LLMs

Continual learning is essential for foundation models. The proposed self-distillation fine-tuning (SDFT) method uses in-context learning to generate on-policy training signals, avoiding explicit reward functions and reducing forgetting.
