#tars3d

from Engadget

1 day ago

Sony's gaming division just bought an AI startup that turns photos into 3D volumes

Following the acquisition, the Cinemersive Labs team will join SIE's Visual Computing Group (VCG) and contribute to our broader efforts in advancing state of the art visual computing within games. This includes applying machine learning to enhance gameplay visuals, improve rendering techniques, and unlock new levels of visual fidelity for players.

Marketing tech

from Ars Technica

2 weeks ago

At the last minute, Meta decides not to kill Horizon Worlds VR after all

We have decided, just today in fact, that we will keep Horizon Worlds working in VR. Only games and experiences that already support VR will continue to do so, while new games will be exclusive to mobile, and the majority of the team's development focus will be on mobile instead of VR.

Tech industry

Productivity

from Business Matters

2 weeks ago

7 Best AI Construction Scheduling Tools for What-If Recovery Planning

AI-driven scheduling platforms detect project delays early and run simulations to identify the fastest recovery path, helping construction teams recover time and stakeholder trust before schedules slip.

Arts

from The Art Newspaper - International art news and events

2 weeks ago

New book shows why physical maps have an important role to play in our digital world

A cartography professor discovered 96 historically significant maps in a forgotten university archive, revealing cartography's vital role in preserving sociopolitical memory and demonstrating maps' importance beyond navigation.

#ai-agents

Artificial intelligence

from Medium

2 weeks ago

Designers, your next user won't be human

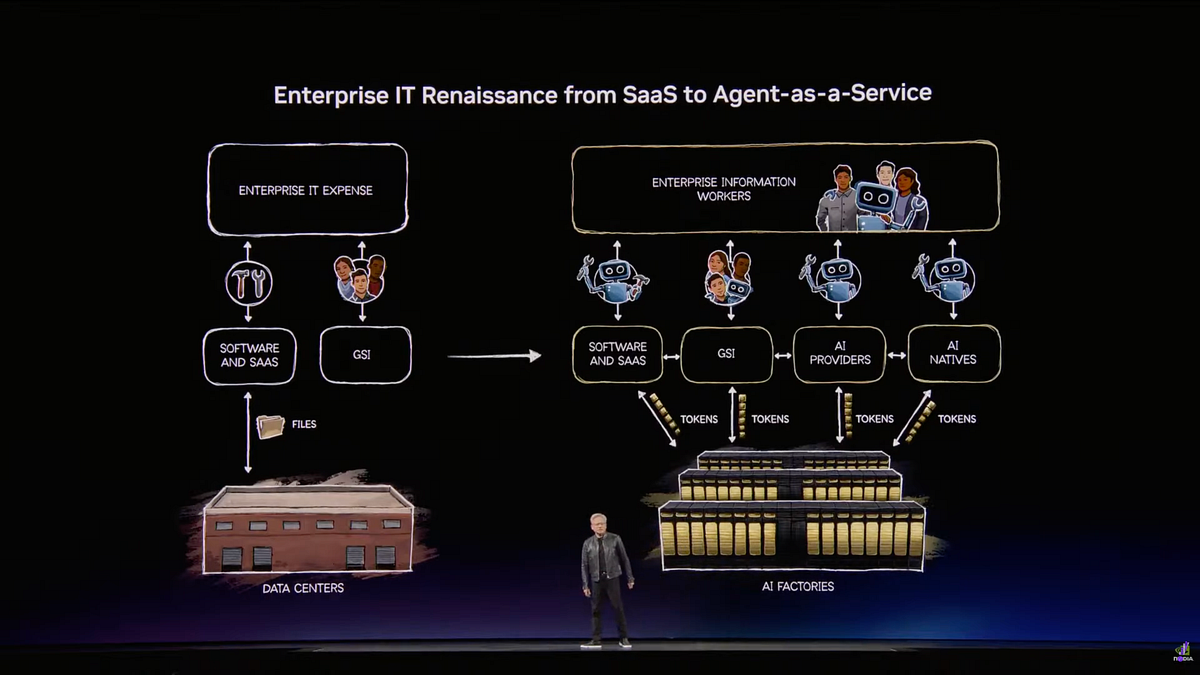

Every SaaS company will transform into agentic-as-a-service platforms, requiring designers to solve fundamental problems in agent interaction, behavior, and user experience as AI agents become central to enterprise software.

from insideevs.com

3 weeks ago

Google Maps Is Getting 'The Most Significant Update' In Over A Decade

The new Immersive Navigation mode introduces a detailed 3D map that includes buildings, overpasses, crosswalks, traffic lanes, traffic lights, and stop signs. Google bills this new mode as being the most significant update in over a decade to the app's driving experience. According to the American IT giant, the changes should help drivers stay focused and informed on the road, with Maps giving fresh, real-world information and natural directions.

Gadgets

Online learning

from eLearning

2 weeks ago

Building Realistic Software Simulations with Enhanced Shapes in Adobe Captivate

Adobe Captivate records software actions and converts them into interactive eLearning courses with three modes: Demo for viewing, Training for practicing, and Assessment for testing.

#ai-avatars

Marketing tech

from eLearning Industry

2 weeks ago

D-ID Launches V4 Expressive Visual Agents For Real-Time AI Interaction

D-ID launches V4 Expressive Visual Agents, ultra-high-fidelity AI avatars enabling real-time LLM conversations and enterprise video content with sub-0.5-second latency and 4K resolution.

Artificial intelligence

from Psychology Today

3 weeks ago

Reality Splits: Seeing Different Worlds in Mixed Reality

Mixed reality systems create perceptual conflicts when users see different digital objects in shared spaces, reducing collaboration synchrony and increasing mental effort while eroding confidence in joint decisions.

from InfoQ

1 month ago

[Video Podcast] AI Autonomy Is Redefining Architecture: Boundaries Now Matter Most

Earlier we did episode one of this with Grady Booch, where we discussed a principled view of what's changing and what remains unchanged, what is hyped and what is actually coming naturally with the AI changes. We also spoke about the difference between design and architecture, what teams are focusing on, and what they might be missing.

Design

Arts

from designboom | architecture & design magazine

1 month ago

three suspended digital screens translate AI imagery into luminous spatial installation

UNFOLD PLANE's spatial installation translates artificial intelligence concepts into an integrated architectural environment using suspended screens, dynamic lighting, and reflective surfaces that respond to visitor movement.

from Yanko Design - Modern Industrial Design News

1 month ago

Lenovo's Yoga Book Concept Makes 3D Models Float Above the Screen

The upper display renders 3D content without glasses, using Lenovo's PureSight Pro Tandem OLED technology to show depth and spatial volume directly on screen. A spacecraft that's been modeled in three dimensions appears to float, with genuine perceived distance between its front and rear planes, rather than sitting flat behind glass.

Gadgets

from GameSpot

1 month ago

Arc Raiders Players Are Seeing UFOs In The Sky

A handful of Arc Raiders players have reported seeing the silhouette of a large ship in the clouds, but so far, only a Reddit user called Bewarden captured the incident in a video. The ship appears to be massive, but there's no indication yet it's confirmation of aliens in this world.

Video games

from WIRED

1 month ago

Pico's Project Swan XR Headset Wants to Go Where the Apple Vision Pro Failed

One of the big focuses of the new operating system version is what Pico calls PanoScreen, a feature that lets the wearer run multiple applications at once while also keeping a 360-degree view of the real-world space around them. Other users can pop into the space as 3D avatars while you spin around to see spreadsheets, browser tabs, design software, or whatever else you're working on.

Wearables

from Gadgets 360

1 month ago

Apple's AI Pendant Said to Use In-House Visual Intelligence Models

According to the latest edition of Gurman's Power On newsletter, the Cupertino-based tech giant is working on its AI visual models to enable the Visual Intelligence features on the rumoured AI pendant, AI smart glasses, and AirPods model with cameras. This will enable the wearables to provide environment-based answers to users and take context-based actions. Gurman adds that Apple intends to make Visual Intelligence and visual models integral to its upcoming wearables.

Apple

Artificial intelligence

from ArchDaily

4 weeks ago

Compute Isn't Weightless: AI Infrastructure and the Architecture of the City

AI development is reshaping urban infrastructure and spatial planning in the Greater Bay Area through government-led initiatives that translate computational needs into physical zones, data centers, and specialized districts.

from Computerworld

1 month ago

With attention shifting to AI smart glasses, VR faces another reality check

"It's not an overstatement to declare another VR winter," said J.P. Gownder, vice president and principal analyst at Forrester. "I think we might even go as far as to say there's only a handful of successful scenarios where people are using VR." This assessment reflects the industry's struggle to find practical applications beyond niche markets.

Gadgets

from eLearning Industry

2 months ago

Utilizing VR And AR In High-Risk Scenarios For Manufacturing Training

Manufacturing environments are becoming more advanced, automated, and electrified, but they are also becoming more dangerous. High-voltage (HV) systems, robotics, advanced machinery, and tightly coupled production lines introduce risks that traditional training methods are no longer equipped to address effectively. Instructor-led classroom training, PDFs, videos, and even supervised shadowing have long been the foundation of manufacturing training. However, when the consequences of error include severe injury, fatal accidents, equipment damage, or production downtime,

Education

from GSMArena.com

2 months ago

Razer unveils an "AI-native" headset with cameras that see what you see

The Motoko's dual first-person-view cameras are positioned at eye level to basically see what you see, enabling real-time object and text recognition - translating street signs, tracking gym reps, summarizing documents on the fly, all of that. There are also dual far and near-field mics, working together to capture voice commands and pick up dialogue within view.

Mobile UX

Real estate

from London Business News | Londonlovesbusiness.com

1 month ago

3D site plan rendering: Visualise entire development, landscape and infrastructure

3D site plan rendering converts complex technical plans into instantly readable visualisations that align stakeholders, accelerate decisions, and reduce late-stage project surprises.

Data science

from London Business News | Londonlovesbusiness.com

2 months ago

Is Maptive the best mapping software to conduct complex spatial analysis

Maptive delivers cloud-based, no-code spatial analysis and mapping that handles large datasets, automated territories, route planning, and enterprise-grade global mapping infrastructure.

Software development

from Business Matters

2 months ago

5 Reasons Why Maptive is The Best GIS Platform for Location Intelligence

Maptive transforms spreadsheet location data into fast, browser-based interactive maps and optimized routes, delivering accessible location intelligence without specialized GIS expertise.

Artificial intelligence

from ArchDaily

1 month ago

Beyond the Render: How AI Is Restructuring Architectural Documentation

Invisible, repetitive technical work—specification, detailing, and documentation—sustains buildable, safe architecture and AI can assist by organizing and interpreting this documentation.

from Jakub

2 months ago

Using AI as a Design Engineer

When I work on something, whether it's at Interfere or my personal projects, I like to experiment a lot. Design engineering is a lot about trial and error, and I often spend hours trying to find the "this feels right" moment. This is where AI helps. Instead of spending hours on a concept that I'm unsure of, I try that concept out in a matter of minutes, and throw it away if it doesn't feel right.

Software development

from eLearning Industry

2 months ago

The Role Of AI In Employee Training And Development

The Learning and Development (L&D) landscape relied on the same standardized programs and inflexible slide decks for decades. This model complied with basic training on a repeat basis. Basic training overshadowed what true talent development could (and should) offer. The pace of business transformation has surpassed our capacity to keep curricula up to date. Today, the old training model isn't just inefficient; it is insufficient.

Online learning

Gadgets

from Yanko Design - Modern Industrial Design News

2 months ago

Razer's Project AVA Brings Holographic AI Companions to Your Desk

Razer's Project AVA is a desk holographic AI that projects a 5.5-inch animated avatar which talks, learns habits, shows facial expressions, and syncs lip movement.

from Engadget

2 months ago

Google's Project Genie lets you generate your own interactive worlds

This past summer, Google DeepMind debuted Genie 3. It's what's known as a world model, an AI system capable of generating images and reacting as the user moves through the environment the software is simulating. At the time, DeepMind positioned Genie 3 as a tool for training AI agents. Now, it's making the model available to people outside of Google to try with Project Genie.

Artificial intelligence

from The Verge

1 month ago

Meta's Quest 3S is $50 off, and includes Batman: Arkham Shadow for free

The Quest 3S can play the same games as the pricier Meta Quest 3, with Batman: Arkham Shadow and Maestro performing impressively well in our testing. Additionally, you can stream Xbox games with a Game Pass subscription and even wirelessly tether it to a gaming PC to play SteamVR games like Half-Life: Alyx. The Quest 3S is powered by the same Qualcomm Snapdragon XR2 Gen 2 processor that's in the pricier Quest 3,

Gadgets

Artificial intelligence

from Ars Technica

2 months ago

Google Project Genie lets you create interactive worlds from a photo or prompt

Project Genie is a Google research prototype that generates short, remixable 60-second interactive AI video worlds with limitations, content restrictions, and a high subscription cost.

Gadgets

from GameSpot

2 months ago

Razer's New Holographic AI Assistant Sits On Your Desk And Promises Help, Not Judgement

Razer's Project Ava is an AI-powered holographic desktop companion with animated personalities, contextual vision and audio sensing, real-time interaction, and PC-connected USB-C operation.

from ZDNET

2 months ago

I didn't need this, but I used AI to 3D print a tiny figurine of myself - here's how

That's today's project. In this article, I'll show you how I started with a picture of me, used some intermediate AI, and turned it into a physical 3D plastic me figurine. Do I need a me figurine? No. Is it cool? Yeah. Does it show off another AI capability? Yep. I'll be honest. I didn't expect my editor to sign off on this pitch.

Artificial intelligence

from Medium

1 month ago

AI's text-trap: Moving towards a more interactive future

LLMs have made AI assistants a standard feature across SaaS. AI assistants allow users to instantly retrieve information and interact with a system through text-based prompts. Mathias Biilmann, in his article "Introducing AX: Why Agent Experience Matters," discusses two distinct approaches to building AI assistants. The Closed Approach involves a conversational assistant embedded directly within a single SaaS product. Examples include Zoom's AI Companion, Salesforce CRM's Einstein, and Microsoft's Copilot. The Open Approach involves external conversational assistants, such as Claude, ChatGPT, and Gemini,

Artificial intelligence
