#update-orchestration

#kubernetes #devops #ai #security #observability #software-development #cicd #automation #agentic-ai

from Medium

2 weeks ago

Modernizing Kubernetes Traffic: A Guide to the Gateway API Migration

If Ingress is the legacy path, then the Gateway API is the modern highway. In this guide, I will walk you through a complete migration, demonstrating how to swap out your old Ingress controllers for Envoy Gateway. We won't just move traffic; we'll leverage Envoy's power to implement seamless request mirroring and more robust path-based routing, capabilities that were previously hidden behind complex annotations.
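As a rough illustration of what path-based routing with request mirroring looks like as a first-class Gateway API resource rather than an annotation, the sketch below builds an `HTTPRoute` manifest as a Python dict and prints it as JSON. The resource names (`api-route`, `eg`, `api-v1`, `api-v2-shadow`), the `/api` prefix, and port 8080 are assumptions made for the example; they do not come from the linked guide.

```python
import json


def build_http_route(name, gateway, path_prefix, backend, mirror_backend, port=8080):
    """Return an HTTPRoute manifest (as a dict) that routes one path prefix
    to a primary backend and mirrors the same requests to a second backend."""
    return {
        "apiVersion": "gateway.networking.k8s.io/v1",
        "kind": "HTTPRoute",
        "metadata": {"name": name},
        "spec": {
            # The Gateway this route attaches to (hypothetical name).
            "parentRefs": [{"name": gateway}],
            "rules": [
                {
                    # Path-based match expressed in the spec, not in annotations.
                    "matches": [{"path": {"type": "PathPrefix", "value": path_prefix}}],
                    # Mirror a copy of each matching request to the shadow backend.
                    "filters": [
                        {
                            "type": "RequestMirror",
                            "requestMirror": {
                                "backendRef": {"name": mirror_backend, "port": port}
                            },
                        }
                    ],
                    # The backend that actually serves the response.
                    "backendRefs": [{"name": backend, "port": port}],
                }
            ],
        },
    }


# All names below are placeholders for illustration only.
route = build_http_route("api-route", "eg", "/api", "api-v1", "api-v2-shadow")
print(json.dumps(route, indent=2))
```

The JSON output could be saved to a file and applied with `kubectl apply -f`, assuming the Gateway API CRDs and a Gateway named `eg` already exist in the cluster.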

Web development

Information security

from DevOps.com

2 weeks ago

Harness Extends AI Security Reach Across Entire DevOps Workflow - DevOps.com

Harness launched AI security capabilities including automatic code securing during AI-assisted development and a module discovering, testing, and protecting AI components within applications.

from InfoWorld

2 weeks ago

Migrating from Apache Airflow v2 to v3

Airflow 3 represents a clear architectural direction for the project: API-driven execution, better isolation, data-aware scheduling and a platform designed for modern scale. While Airflow 2.x is still widely used, it is clearly moving toward long-term maintenance (end-of-life April 2026) with most innovation and architectural investment happening in the 3.x line.

Software development

from Techzine Global

2 months ago

Developers struggle with container security

Almost a quarter of those surveyed said they had experienced a container-related security incident in the past year. The bottleneck is rarely in detecting vulnerabilities, but mainly in what happens next. Weeks or months can pass between the discovery of a problem and the actual implementation of a solution. During that period, applications continue to run with known risks, making organizations vulnerable, reports The Register.

Information security

from DevOps.com

3 weeks ago

Zero Downtime Multicloud Migrations for Observability Control Planes - DevOps.com

An observability control plane isn't just a dashboard. It's the operational authority system. It defines alert rules, routing, ownership, escalation policy, and notification endpoints. When that layer is wrong, the impact is immediate. The wrong team gets paged. The right team never hears about the incident. Your service level indicators look clean while production burns.

DevOps

from DevOps.com

1 month ago

What to do About AI's Forced Rethink of Reliability in Modern DevOps - DevOps.com

For years, reliability discussions have focused on uptime and whether a service met its internal SLO. However, as systems become more distributed, reliant on complex internet stacks, and integrated with AI, this binary perspective is no longer sufficient. Reliability now encompasses digital experience, speed, and business impact. For the second year in a row, The SRE Report highlights this shift.

Software development

Software development

from InfoQ

1 month ago

How a Small Enablement Team Supported Adopting a Single Environment for Distributed Testing

Reusing one development environment with versioned deployments and proxy routing, enabled by a small enablement team and cultural buy-in, scales distributed-system testing.

from DevOps.com

1 month ago

Bot-Driven Development: Redefining DevOps Workflow - DevOps.com

Industry professionals are realizing what's coming next, and it's well captured in a recent LinkedIn thread that says AI is moving on from being just a helper to a full-fledged co-developer - generating code, automating testing, managing whole workflows and even taking charge of every part of the CI/CD pipeline. Put simply, AI is transforming DevOps into a living ecosystem, one driven by close collaboration between human judgment and machine intelligence.

Software development

DevOps

from Amazon Web Services

1 month ago

Migrate Amazon EC2 to ECS Express Mode using Kiro CLI and MCP servers | Amazon Web Services

Amazon ECS Express Mode simplifies containerized workload deployment by automating task definitions and service orchestration, reducing manual operational overhead and accelerating migration from traditional EC2 deployments.

from InfoWorld

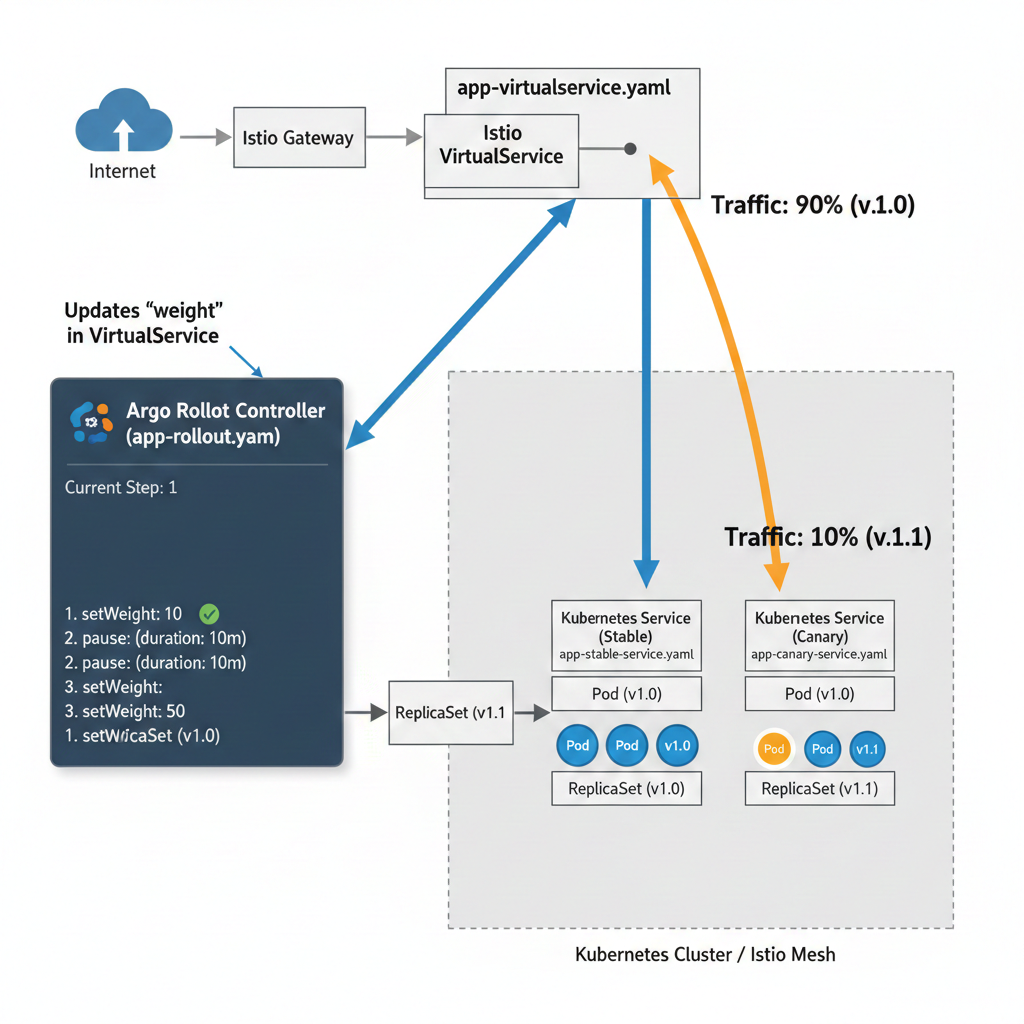

2 months ago

What is GitOps? Extending devops to Kubernetes and beyond

Over the past decade, software development has been shaped by two closely related transformations. One is the rise of devops and continuous integration and continuous delivery (CI/CD), which brought development and operations teams together around automated, incremental software delivery. The other is the shift from monolithic applications to distributed, cloud-native systems built from microservices and containers, typically managed by orchestration platforms such as Kubernetes.

Software development

from Dbmaestro

4 years ago

[VIDEO] End-to-End CI/CD with GitLab and DBmaestro

DBmaestro is a database release automation solution that can blend the database delivery process seamlessly into your current DevOps ecosystem with minimal fuss, and without complex installation or maintenance. Its handy database pipeline builder allows you to package, verify, and deploy, and gives you the ability to pre-run the next release in a provisional environment to detect errors early. You get a zero-friction pipeline, which is often not the case with the database delivery process.

Software development

from Dbmaestro

4 years ago

What is Database Delivery Automation and Why Do You Need It?

Manual database deployment means longer release times. Database specialists have to spend several working days prior to release writing and testing scripts, which in itself leads to prolonged deployment cycles and less time for testing. As a result, applications are not released on time and customers do not receive the latest updates and bug fixes. Manual work inevitably results in errors, which cause problems and bottlenecks.

Software development

from App Developer Magazine

1 year ago

The Rise of Agentic Orchestration

According to Tamas Cser, Founder and CEO of Functionize, the industry is on the verge of a structural shift. By 2026, development teams will transition from AI copilots to agentic fleets: coordinated groups of specialized AI agents operating semi-autonomously across the entire software lifecycle. In this new paradigm, engineering excellence is measured less by syntactic mastery and more by the ability to orchestrate intelligent systems: delegating, validating, and refining work continuously, at machine speed.

Software development

from DevOps.com

1 month ago

Gas Town: What Kubernetes for AI Coding Agents Actually Looks Like - DevOps.com

Steve Yegge thinks he has the answer. The veteran engineer - 40+ years at Amazon, Google and Sourcegraph - spent the second half of 2025 building Gas Town, an open-source orchestration system that coordinates 20 to 30 Claude Code instances working in parallel on the same codebase. He describes it as "Kubernetes for AI coding agents." The comparison isn't just marketing. It's architecturally accurate.

DevOps

from Dbmaestro

5 years ago

Database Delivery Automation in the Multi-Cloud World

The main advantage of going the Multi-Cloud way is that organizations can "put their eggs in different baskets" and be more versatile in their approach to how they do things. For example, they can mix it up and opt for a cloud-based Platform-as-a-Service (PaaS) solution when it comes to the database, while going the Software-as-a-Service (SaaS) route for their application endeavors.

DevOps

DevOps

from InfoWorld

2 months ago

From distributed monolith to composable architecture on AWS: A modern approach to scalable software

Migrating distributed monoliths to a composable AWS architecture yields loosely coupled, autonomous services that improve scalability, resilience, deployment velocity, and team autonomy.

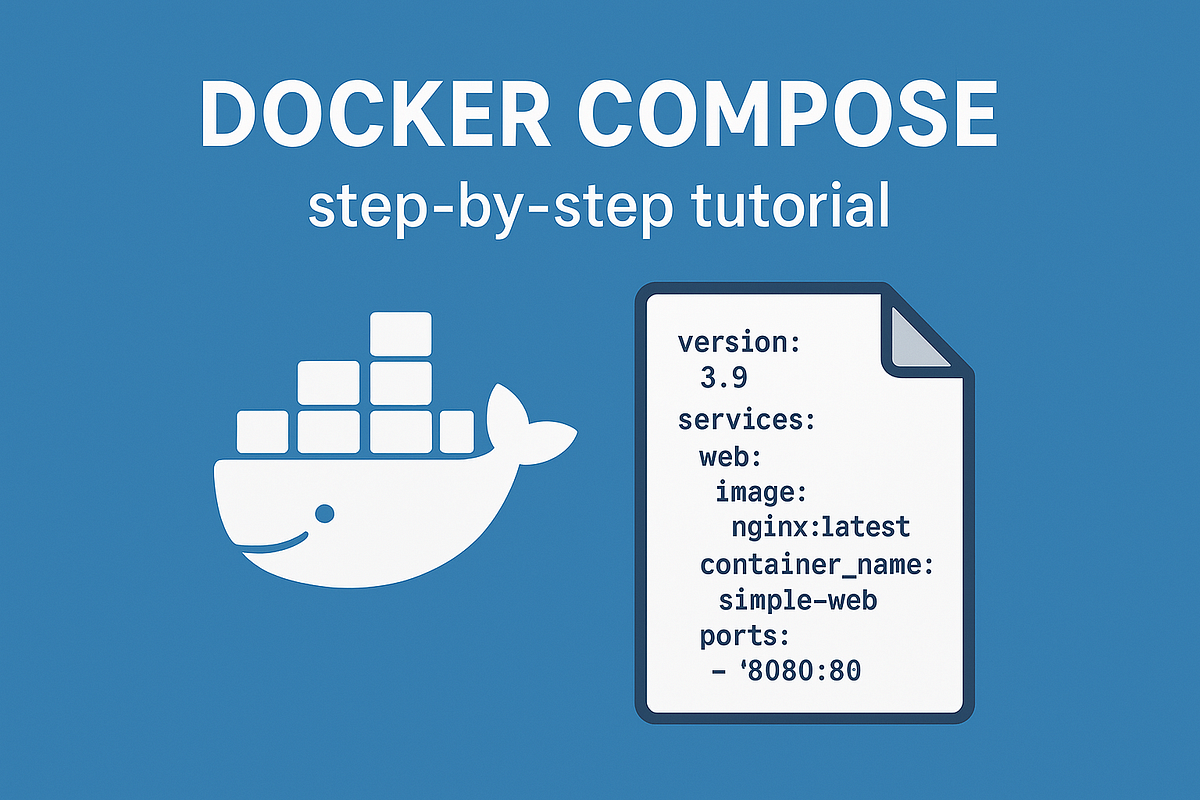

from Medium

4 months ago

Cut Your Docker Build Time in Half: 6 Essential Optimization Techniques

Docker builds images in layers, caching each one. When you rebuild, Docker reuses unchanged layers to avoid re-executing steps; this is build caching. So the order of your instructions and the size of your build context have a huge impact on build speed and image size. Here are quick tips to optimize your builds and make them up to twice as fast: 1. Place least-changing instructions at the top
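To see why instruction order matters for layer caching, here is a toy model in Python. It is a sketch under simplifying assumptions, not Docker's actual cache implementation: each layer's cache key is taken to be a hash of its parent's key plus the instruction text, and the `# source v1` / `# deps v1` suffixes stand in for a fingerprint of the files being copied. The instruction lists are hypothetical.

```python
import hashlib


def layer_keys(instructions):
    """Compute a chained cache key per layer: each key depends on the
    instruction itself and on every instruction that came before it."""
    keys = []
    parent = ""
    for instr in instructions:
        parent = hashlib.sha256((parent + "\n" + instr).encode()).hexdigest()
        keys.append(parent)
    return keys


def cached_layers(old, new):
    """Count how many leading layers of the new build match the old build.
    The first mismatch invalidates that layer and everything after it."""
    reused = 0
    for old_key, new_key in zip(layer_keys(old), layer_keys(new)):
        if old_key != new_key:
            break
        reused += 1
    return reused


# Frequently changing source is copied early, so a code change
# invalidates the expensive dependency install as well.
slow_v1 = [
    "FROM python:3.12-slim",
    "COPY . /app  # source v1",
    "RUN pip install -r /app/requirements.txt",
]
slow_v2 = [slow_v1[0], "COPY . /app  # source v2", slow_v1[2]]

# Rarely changing dependency manifest is copied first; source goes last.
fast_v1 = [
    "FROM python:3.12-slim",
    "COPY requirements.txt /app/  # deps v1",
    "RUN pip install -r /app/requirements.txt",
    "COPY . /app  # source v1",
]
fast_v2 = fast_v1[:3] + ["COPY . /app  # source v2"]

print(cached_layers(slow_v1, slow_v2))  # 1 layer reused
print(cached_layers(fast_v1, fast_v2))  # 3 layers reused
```

In this model, moving the least-changing instructions to the top means a source-only change rebuilds just the final layer instead of re-running the dependency install.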

DevOps

DevOps

from Amazon Web Services

1 month ago

Building a scalable code modernization solution with AWS Transform custom | Amazon Web Services

An open-source infrastructure enables enterprise-scale, parallel AWS Transform custom code modernizations using AWS Batch, Fargate, REST APIs, and CloudWatch monitoring.
