#ai-cpus

#ai #nvidia #ai-infrastructure #openai #arm #intel #amd #stock-market #meta #technology #data-centers #semiconductors

from Techzine Global

1 day ago

IGEL OS can now run AI models locally on endpoints

AI Armor provides dynamic runtime security and relies on a central policy engine in the Universal Management Suite (UMS) to meet compliance requirements.

DevOps

#ryzen-ai-400-series

Gadgets

from Ars Technica

1 month ago

AMD will bring its "Ryzen AI" processors to standard desktop PCs for the first time

AMD's Ryzen AI 400-series desktop processors are repackaged laptop chips with up to 8 CPU cores and Radeon 860M GPUs, targeting business desktops rather than gaming due to high DDR5 memory costs.

Data science

from TechRepublic

1 month ago

Inside the Gas Engine Strategy Powering AI's Next Wave

Gas reciprocating engines are emerging as a critical power solution for AI data centers, with manufacturers like Caterpillar securing multi-gigawatt orders to meet demand that exceeds grid and turbine capacity.

Artificial intelligence

from TechCrunch

2 weeks ago

Niv-AI exits stealth to wring more power performance out of GPUs

AI data centers waste significant power due to GPU demand surges, forcing operators to throttle performance by up to 30%, prompting startups like Niv-AI to develop precision power management solutions.

from TechRepublic

3 weeks ago

Meta's New AI Chips Reveal a Faster, More Self-Reliant Hardware Strategy

Meta is building these chips because buying AI hardware at scale is expensive, and relying too heavily on external suppliers leaves less room to shape that hardware to its own needs. Building more in-house could help the company keep AI costs in check.

Artificial intelligence

from InfoWorld

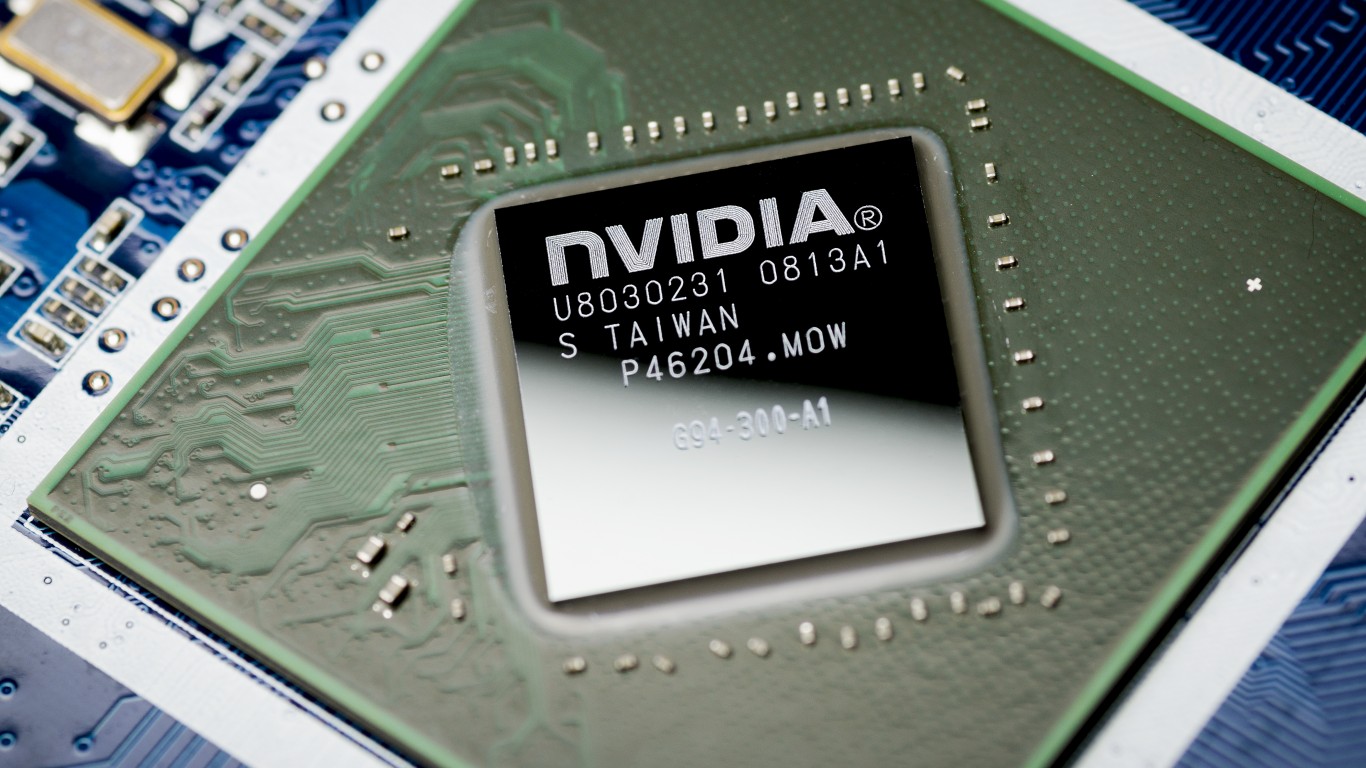

3 weeks ago

Nvidia launches Nemotron 3 Super to power enterprise AI agents

Nemotron 3 Super's hybrid architecture combining Mamba and Transformer technologies enables enterprises to run complex AI agents more efficiently with lower costs and faster execution on existing infrastructure.

Artificial intelligence

from 24/7 Wall St.

1 month ago

NVIDIA Cements Its Role as the Backbone of AI Infrastructure

NVIDIA's networking revenue grew 162% year-over-year to $8.2 billion, nearly tripling GPU growth, signaling a shift from chip seller to integrated infrastructure provider selling complete AI data center systems.

from The Register

2 months ago

Unpacking AMD's latest datacenter CPU and GPU announcements

AMD clarified those estimates are based on a comparison between an eight-GPU MI300X node and an MI500 rack system with an unspecified number of GPUs. The math works out to eight MI300Xs that are 1000x less powerful than X-number of MI500Xs. And since we know essentially nothing about the chip besides that it'll ship in 2027, pair TSMC's 2nm process tech with AMD's CDNA 6 compute architecture, and use HBM4e memory, we can't even begin to estimate what that 1000x claim actually means.

Artificial intelligence

from TechCrunch

2 months ago

Quadric rides the shift from cloud AI to on-device inference - and it's paying off

The company, which is based in San Francisco and has an office in Pune, India, is targeting up to $35 million this year as it builds a royalty-driven on-device AI business. That growth has buoyed the company, which now has a post-money valuation of between $270 million and $300 million, up from around $100 million in its 2022 Series B, Kheterpal said.

Artificial intelligence
